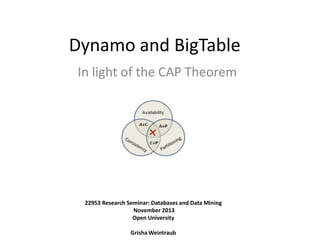

Dynamo and BigTable in light of the CAP theorem

- 1. Dynamo and BigTable in light of the CAP Theorem • 22953 Research Seminar: Databases and Data Mining • November 2013, Open University • Grisha Weintraub

- 2. Overview • Introduction to DDBS and NoSQL • CAP Theorem • Dynamo (AP) • BigTable (CP) • Dynamo vs. BigTable

- 3. Distributed Database Systems • Data is stored across several sites that share no physical component. • Systems that run on each site are independent of each other. • Appears to user as a single system.

- 4. Distributed Data Storage • Partitioning: data is partitioned into several fragments that are stored at different sites. – Horizontal: by rows. – Vertical: by columns. • Replication: the system maintains multiple copies of the data, stored at different sites. • Replication and partitioning can be combined!

- 5. Partitioning • [Figure: a key-value table (keys x, y, z, w) split across several sites, once horizontally (by rows) and once vertically (by columns).] • Locality of reference – data is most likely to be updated and queried locally.

- 6. Replication • [Figure: copies of the same key-value rows (keys x, y, z, w) stored at sites A, B, C and D.] • Pros – increased availability of data and faster query evaluation. • Cons – increased cost of updates and complexity of concurrency control.

- 7. Updating distributed data • Quorum voting (Gifford, SOSP’79): – N – number of replicas. – At least R copies must be read. – At least W copies must be written. – R + W > N, so every read set overlaps every write set in at least one replica. – Example: N=10, R=4, W=7. • Read-any write-all: R=1, W=N.
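The quorum condition on this slide can be checked with a few lines of arithmetic. The sketch below is illustrative only; the function names `is_valid_quorum` and `min_overlap` are made up for this example and do not come from the slides.

```python
def is_valid_quorum(n: int, r: int, w: int) -> bool:
    """Return True when R + W > N, i.e. every read set meets every write set."""
    return 1 <= r <= n and 1 <= w <= n and r + w > n


def min_overlap(n: int, r: int, w: int) -> int:
    """Smallest number of replicas shared by any read set and any write set."""
    return max(0, r + w - n)


print(is_valid_quorum(10, 4, 7))   # slide example -> True
print(min_overlap(10, 4, 7))       # -> 1
print(is_valid_quorum(10, 1, 10))  # read-any write-all -> True
```

With N=10, R=4 and W=7 a read always touches at least one replica that saw the latest write, which is what lets the reader pick the newest version.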

- 8. NoSQL • No SQL: – Not RDBMS. – Not using the SQL language. – Not only SQL? • Flexible schema • Horizontal scalability • Relaxed consistency → high performance & availability

- 9. Overview • Introduction to DDBS and NoSQL √ • CAP Theorem • Dynamo (AP) • BigTable (CP) • Dynamo vs. BigTable

- 10. CAP Theorem • Eric A. Brewer. Towards robust distributed systems (Invited Talk) , July 2000 • S. Gilbert and N. Lynch, Brewer’s Conjecture and the Feasibility of Consistent, Available, Partition-Tolerant Web Services, June 2002 • Eric A. Brewer. CAP twelve years later: How the 'rules' have changed, February 2012 • S. Gilbert and N. Lynch, Perspectives on the CAP Theorem, February 2012

- 11. CAP Theorem • Consistency – equivalent to having a single up-to-date copy of the data. • Availability - every request received by a non-failing node in the system must result in a response. • Partition tolerance - the network will be allowed to lose arbitrarily many messages sent from one node to another. Theorem – You can have at most two of these properties for any shared-data system.

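- 13. CAP Theorem - Proof • [Figure: two replicas both hold x=0 and are separated by a network partition; one replica is updated to x=5, then a client asks the other replica for x.] • The other replica can either: 1. answer x=0 → not consistent, or 2. give no response → not available.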

- 14. CAP – 2 of 3 • Consistency + Partition Tolerance (availability is given up) • Trivial: – A system that ignores all requests meets these requirements. • Best-effort availability: – Read-any write-all systems become unavailable only when messages are lost. • Examples: – Distributed database systems, BigTable

- 15. CAP – 2 of 3 • Availability + Partition Tolerance (consistency is given up) • Trivial: – The service can trivially return the initial value in response to every request. • Best-effort consistency: – A quorum-based system, modified to time out lost messages, returns inconsistent (and, in particular, stale) data only when messages are lost. • Examples: – Web caches, Dynamo

- 16. CAP – 2 of 3 • Consistency + Availability (partition tolerance is given up) • If there are no partitions, it is clearly possible to provide consistent, available data (e.g. read-any write-all). • Does choosing CA make sense? Eric Brewer: – “The general belief is that for wide-area systems, designers cannot forfeit P and therefore have a difficult choice between C and A.” – “If the choice is CA, and then there is a partition, the choice must revert to C or A.”

- 17. Overview • Introduction to DDBS and NoSQL √ • CAP Theorem √ • Dynamo (AP) • BigTable (CP) • Dynamo vs. BigTable

- 18. Dynamo - Introduction • Highly available key-value storage system. • Provides an “always-on” experience. • Prefers availability over consistency. • “Customers should be able to view and add items to their shopping cart even if disks are failing, network routes are flapping, or data centers are being destroyed by tornados.”

- 19. Dynamo - API • Distributed hash table: – put(key, context, object) – associates the given object with the specified key and context. • context – metadata about the object, including information such as the version of the object. – get(key) – returns the object to which the specified key is mapped, or a list of objects with conflicting versions, along with a context.

- 20. Dynamo - Partitioning • Naive approach: – Hash the key. – Apply modulo n (n = number of nodes). • Example with 4 nodes: key = “John Smith”, hash(key) = 19, 19 mod 4 = 3. • Adding or deleting a node changes n, so most keys are remapped to a different node: a total mess!
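To see why the modulo scheme copes badly with membership changes, the following sketch counts how many keys change owner when a cluster grows from 4 to 5 nodes. It is an illustration under assumptions: MD5 is used only as a convenient stable hash, and the key names are invented.

```python
import hashlib


def stable_hash(key: str) -> int:
    """Hash a key to a large integer (MD5 chosen only for determinism)."""
    return int.from_bytes(hashlib.md5(key.encode("utf-8")).digest(), "big")


def node_for(key: str, n_nodes: int) -> int:
    """Naive placement: hash the key, then take it modulo the node count."""
    return stable_hash(key) % n_nodes


def moved_fraction(keys, old_n: int, new_n: int) -> float:
    """Fraction of keys whose node changes when the node count changes."""
    moved = sum(1 for k in keys if node_for(k, old_n) != node_for(k, new_n))
    return moved / len(keys)


keys = [f"user-{i}" for i in range(10_000)]  # hypothetical keys
print(f"{moved_fraction(keys, 4, 5):.0%} of keys move when going from 4 to 5 nodes")
```

A key keeps its node only when its hash gives the same remainder modulo 4 and modulo 5, which happens for roughly one key in five, so about four fifths of the data has to be moved.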

- 21. Dynamo - Partitioning • Consistent hashing (STOC’97): – Each node is assigned a random position on the ring. – Each key is hashed to a fixed point on the ring. – The node is chosen by walking clockwise from the hash location. • [Figure: ring of nodes A to G with hash(key) marked on it.] • Adding/deleting nodes → uneven partitioning!

- 22. Dynamo - Partitioning • Virtual nodes : – Each physical node is assigned to multiple points in the ring.
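A minimal sketch of a consistent-hash ring with virtual nodes follows. It is not Dynamo's implementation: the class name `HashRing`, the `node#i` labelling of virtual nodes, the default of 100 virtual nodes and the use of MD5 are all assumptions made for illustration.

```python
import bisect
import hashlib


def ring_position(label: str) -> int:
    """Map a label (key or virtual-node name) to a point on the ring."""
    return int.from_bytes(hashlib.md5(label.encode("utf-8")).digest(), "big")


class HashRing:
    def __init__(self, nodes, vnodes_per_node: int = 100):
        self.vnodes_per_node = vnodes_per_node
        self._points = []  # sorted list of (position, physical node)
        for node in nodes:
            self.add_node(node)

    def add_node(self, node: str) -> None:
        # Each physical node owns several points (virtual nodes) on the ring.
        for i in range(self.vnodes_per_node):
            bisect.insort(self._points, (ring_position(f"{node}#{i}"), node))

    def remove_node(self, node: str) -> None:
        self._points = [p for p in self._points if p[1] != node]

    def node_for(self, key: str) -> str:
        # Walk clockwise: first point at or after the key's position,
        # wrapping around to the start of the ring if necessary.
        idx = bisect.bisect_left(self._points, (ring_position(key),))
        return self._points[idx % len(self._points)][1]


ring = HashRing(["A", "B", "C", "D"])
keys = [f"user-{i}" for i in range(2000)]  # hypothetical keys
before = {k: ring.node_for(k) for k in keys}
ring.add_node("E")
moved = [k for k in keys if ring.node_for(k) != before[k]]
print(f"{len(moved) / len(keys):.0%} of keys moved, all of them to node E")
```

Only keys that fall just before one of the new node's points change owner, and spreading each physical node over many points evens out how much each node gives up.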

- 23. Dynamo - Replication • Each data item is replicated at N nodes. • Preference list – the list of nodes that store a particular key. • Coordinator – the node that handles read/write operations, typically the first in the preference list. • [Figure: ring of nodes A to G with hash(key) marked and N=3.]
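One way to picture the preference list is to walk the ring clockwise from the key's position and collect the first N distinct physical nodes. The ring positions below are invented round numbers, and skipping repeated physical nodes is an assumption that only matters once virtual nodes are in use.

```python
import bisect

# Hypothetical ring: (position, physical node), sorted by position.
RING = [(10, "A"), (20, "B"), (30, "C"), (40, "D"),
        (50, "E"), (60, "F"), (70, "G")]


def preference_list(ring, key_position: int, n: int):
    """Return the first n distinct nodes clockwise from key_position."""
    positions = [pos for pos, _ in ring]
    start = bisect.bisect_left(positions, key_position)
    result = []
    for step in range(len(ring)):
        node = ring[(start + step) % len(ring)][1]
        if node not in result:  # skip extra points of an already chosen node
            result.append(node)
        if len(result) == n:
            break
    return result


print(preference_list(RING, 15, 3))  # ['B', 'C', 'D']
```

In this sketch the first entry (B) would act as the coordinator for the key, and the remaining entries hold the other replicas.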

- 24. Dynamo - Data Versioning • Eventual consistency (replicas are updated asynchronously): – A put() call may return to its caller before the update has been applied at all the replicas. – A get() call may return many versions of the same object. • Reconciliation: – Syntactic – the system resolves conflicts automatically (e.g. a new version overwrites the previous one). – Semantic – the client resolves conflicts (e.g. by merging shopping cart items).

- 25. Dynamo - Data Versioning • Semantic reconciliation: – get() returns two conflicting carts: {item1, item4} and {item1, item6}. – The client merges them and writes the result back: put({item1, item4, item6}). • What about delete?!
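The cart merge on this slide amounts to a set union, which also shows why deletes are awkward. The function name `reconcile_carts` and the second scenario are illustrative assumptions, not taken from the slides.

```python
def reconcile_carts(versions):
    """Merge conflicting cart versions by taking the union of their items."""
    merged = set()
    for cart in versions:
        merged |= set(cart)
    return merged


# The slide's example: two conflicting versions are merged into one cart.
print(sorted(reconcile_carts([{"item1", "item4"}, {"item1", "item6"}])))
# ['item1', 'item4', 'item6']

# Delete problem: starting from {item1, item4}, one branch removes item4
# while a concurrent branch adds item6. The union brings item4 back.
after_delete = {"item1"}
after_add = {"item1", "item4", "item6"}
print(sorted(reconcile_carts([after_delete, after_add])))
# ['item1', 'item4', 'item6']
```

A union can never drop an element, so a removed item resurfaces unless the application records deletions explicitly.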

- 26. Dynamo - Data Versioning • Vector clocks (Lamport, CACM’78): – A list of <node, counter> pairs. – One vector clock is associated with every version of every object. – If every counter in the first object’s clock is less than or equal to the corresponding counter in the second object’s clock, then the first is an ancestor of the second and can be forgotten. – Part of the “context” parameter of put() and get().
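The ancestor rule for vector clocks can be sketched with plain dictionaries that map a node name to its counter. The node names Sx, Sy and Sz and the helper names are assumptions for illustration; a missing node is treated as counter 0.

```python
def descends(a: dict, b: dict) -> bool:
    """True if clock a is at least as large as clock b on every node."""
    return all(a.get(node, 0) >= counter for node, counter in b.items())


def compare(a: dict, b: dict) -> str:
    """Classify clock a relative to clock b."""
    if a == b:
        return "equal"
    if descends(b, a):
        return "ancestor"    # a can be forgotten in favour of b
    if descends(a, b):
        return "descendant"
    return "concurrent"      # conflict: needs reconciliation


def merge(a: dict, b: dict) -> dict:
    """Element-wise maximum, used when a client reconciles two versions."""
    return {n: max(a.get(n, 0), b.get(n, 0)) for n in set(a) | set(b)}


def increment(clock: dict, node: str) -> dict:
    """Return a copy of the clock with the given node's counter bumped."""
    new = dict(clock)
    new[node] = new.get(node, 0) + 1
    return new


d1 = {"Sx": 1}
d2 = increment(d1, "Sx")            # {"Sx": 2}
d3 = increment(d2, "Sy")            # written via node Sy
d4 = increment(d2, "Sz")            # written concurrently via node Sz
print(compare(d1, d2))              # ancestor
print(compare(d3, d4))              # concurrent
d5 = increment(merge(d3, d4), "Sx") # reconciled version written via Sx
print(compare(d3, d5))              # ancestor
```

Versions d3 and d4 are both returned by a read because neither dominates the other; once the client writes the reconciled d5, both can be discarded.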

- 27. Dynamo – get() and put() • Two strategies that a client can use: – A load balancer that selects a node based on load information: the client does not have to link any Dynamo-specific code into its application. – A partition-aware client library: can achieve lower latency because it skips a potential forwarding step.

- 28. Dynamo – get() and put() • Quorum-like system: – R – minimum number of nodes that must participate in a successful read. – W – minimum number of nodes that must participate in a successful write. • put(): – The coordinator generates the vector clock for the new version and writes the new version locally. – The coordinator sends the new version to N nodes. – If at least W-1 nodes respond, the write is successful. • get(): – The coordinator requests all data versions from N nodes. – If at least R-1 nodes respond, it returns the data to the client.
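The put()/get() flow above can be simulated in memory. This is a simplified sketch under stated assumptions: the coordinator is the first replica in the list and is always up, an integer version stands in for the vector clock, a node that is down simply does not answer, and the names `Replica`, `put` and `get` are invented for the example.

```python
class Replica:
    def __init__(self, name: str):
        self.name = name
        self.up = True
        self.store = {}  # key -> (version, value)

    def write(self, key, version, value) -> bool:
        if not self.up:
            return False  # models a lost message or a timeout
        self.store[key] = (version, value)
        return True

    def read(self, key):
        if not self.up:
            return None
        return self.store.get(key, (0, None))


def put(replicas, w: int, key, version, value) -> bool:
    """Coordinator writes locally, then needs W-1 acks from the others."""
    coordinator, others = replicas[0], replicas[1:]
    coordinator.write(key, version, value)
    acks = sum(1 for r in others if r.write(key, version, value))
    return acks >= w - 1


def get(replicas, r: int, key):
    """Coordinator needs R-1 answers from the others, returns the newest."""
    coordinator, others = replicas[0], replicas[1:]
    replies = [rep for rep in (o.read(key) for o in others) if rep is not None]
    if len(replies) < r - 1:
        raise TimeoutError("fewer than R replicas answered")
    replies.append(coordinator.read(key))
    return max(replies, key=lambda reply: reply[0])


a, b, c = Replica("A"), Replica("B"), Replica("C")
nodes = [a, b, c]                        # N = 3, A coordinates
print(put(nodes, 2, "cart", 1, "v1"))    # True
c.up = False
print(put(nodes, 2, "cart", 2, "v2"))    # True: B still acknowledges
c.up, b.up = True, False
print(get(nodes, 2, "cart"))             # (2, 'v2') although C is stale
```

Real Dynamo returns all causally unrelated versions rather than a single maximum; picking the highest integer version here is only a stand-in for that comparison.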

- 29. Dynamo – Handling Failures • Sloppy quorum and hinted handoff: – All read and write operations are performed on the first N healthy nodes. – If a node is down, its replica is sent to another node; the data received by that node is called a “hinted replica”. – When the original node recovers, the hinted replica is written back to it. • [Figure: ring of nodes A to G.]

- 30. Dynamo – Handling Failures • Anti-entropy : – Protocol to keep the replicas synchronized. – To detect inconsistencies – Merkle Tree : • Hash tree where leaves are hashes of the values of individual keys. • Parent nodes higher in the tree are hashes of their respective children.
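A compact sketch of a Merkle root over a replica's key range is given below. Hashing the string `key=value` for each leaf, using SHA-256 and duplicating the last hash on odd-sized levels are assumptions of this example; the slides only say that leaves hash individual values and parents hash their children.

```python
import hashlib


def _h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def merkle_root(items: dict) -> bytes:
    """Root hash over the key/value pairs, taken in sorted key order."""
    level = [_h(f"{k}={v}".encode("utf-8")) for k, v in sorted(items.items())]
    if not level:
        return _h(b"")
    while len(level) > 1:
        if len(level) % 2:            # duplicate the last hash on odd levels
            level.append(level[-1])
        # Each parent is the hash of its two children.
        level = [_h(level[i] + level[i + 1]) for i in range(0, len(level), 2)]
    return level[0]


replica_a = {"x": 5, "y": 7, "z": 10, "w": 12}
replica_b = dict(replica_a)
print(merkle_root(replica_a) == merkle_root(replica_b))  # True: in sync
replica_b["z"] = 11
print(merkle_root(replica_a) == merkle_root(replica_b))  # False: diverged
```

Two replicas with equal roots need no further data exchange; when the roots differ, comparing child hashes level by level narrows the search to the keys that actually diverge.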

- 31. Dynamo – Membership detection • Gossip-based protocol: – Periodically, each node contacts another, randomly selected node in the network. – The two nodes compare their membership histories and reconcile them. • [Figure: ring of nodes A to G with example gossip pairs A–F, B–G, C–E, D–G, E–A, F–B, G–C.]
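The gossip idea can be sketched as repeated pairwise reconciliation of membership views. In this illustration a view maps a member name to a version number and reconciliation keeps the higher version per member; that representation, the seeded random generator and the function names are assumptions, not Dynamo's actual data structures.

```python
import random


def reconcile(view_a: dict, view_b: dict) -> dict:
    """Merge two membership views, keeping the newest entry per member."""
    merged = dict(view_a)
    for member, version in view_b.items():
        merged[member] = max(merged.get(member, 0), version)
    return merged


def gossip_until_converged(views: dict, rng, max_rounds: int = 1000) -> int:
    """Run gossip rounds until every node holds the same view."""
    names = sorted(views)
    for round_no in range(1, max_rounds + 1):
        for name in names:
            # Each node contacts one randomly selected peer per round.
            peer = rng.choice([n for n in names if n != name])
            merged = reconcile(views[name], views[peer])
            views[name] = merged
            views[peer] = dict(merged)
        if all(views[n] == views[names[0]] for n in names):
            return round_no
    raise RuntimeError("views did not converge")


# Initially every node knows only about itself.
views = {name: {name: 1} for name in "ABCDEFG"}
rounds = gossip_until_converged(views, random.Random(0))
print(f"converged after {rounds} round(s); A knows {sorted(views['A'])}")
```

No node needs a global directory: knowledge of each member spreads through the random pairwise exchanges until all views agree.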

- 32. Dynamo - Summary

- 33. Overview • Introduction to DDBS and NoSQL √ • CAP Theorem √ • Dynamo (AP) √ • BigTable (CP) • Dynamo vs. BigTable

- 34. BigTable - Introduction • Distributed storage system for managing structured data that is designed to scale to a very large size.

- 35. BigTable – Data Model • Bigtable is a sparse, distributed, persistent multidimensional sorted map. • The map is indexed by a row key, column key, and a timestamp. • (row_key, column_key, time) → string

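- 36. BigTable – Data Model • Example lookup: (29, name, t2) → “Robert”, where 29 is the row_key (a user_id), name is the column_key and t2 is the timestamp. • Bigtable view: row 15 holds name = John and phone = 178145; row 29 holds name = Bob (at t1) and Robert (at t2), and email = bob@gmail.com. • RDBMS approach: a table (user_id, name, phone, email) with rows (15, John, 178145, null) and (29, Bob, null, bob@gmail.com).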

- 37. BigTable – Data Model • Columns are grouped into column families; a column key has the form family:optional_qualifier. • Bigtable view: row 15 holds name: = John, contactInfo:phone = 17814552 and contactInfo:email = john@yahoo.com. • RDBMS approach: a table (user_id, name) with row (15, John), plus a table (user_id, type, value) with rows (15, phone, 178145) and (15, email, john@yahoo.com).

- 38. BigTable – Data Model • Rows: – The row keys in a table are arbitrary strings. – Every read or write of data under a single row key is atomic. – Data is maintained in lexicographic order by row key. – Each row range is called a tablet, which is the unit of distribution and load balancing. • Example: in the row (user_id 15, name: John, contactInfo:phone 178145, contactInfo:email john@yahoo.com), the row_key is the user_id 15.

- 39. BigTable – Data Model • Column families: – Column keys are grouped into sets called column families. – A column family must be created before data can be stored under any column key in that family. – Access control and both disk and memory accounting are performed at the column-family level. • Example column keys: name:, contactInfo:phone, contactInfo:email.

- 40. BigTable – Data Model • Timestamps: – Each cell in a Bigtable can contain multiple versions of the same data; these versions are indexed by timestamp. – Versions are stored in decreasing timestamp order. – Timestamps may be assigned: • by BigTable (real time in microseconds) • by the client application – Older versions are garbage-collected. • Example: row 29 stores name = Bob at t1 and Robert at t2.
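The data model from the preceding slides, a map from (row_key, column_key, time) to a string, can be mimicked with nested dictionaries. `TinyTable` is a hypothetical in-memory toy for illustration, not the Bigtable API; integer timestamps 1 and 2 stand in for t1 and t2.

```python
class TinyTable:
    def __init__(self):
        self._cells = {}  # (row_key, column_key) -> {timestamp: value}

    def put(self, row: str, column: str, timestamp: int, value: str) -> None:
        self._cells.setdefault((row, column), {})[timestamp] = value

    def get(self, row: str, column: str, timestamp=None):
        """Return the value at a timestamp, or the newest one by default."""
        versions = self._cells.get((row, column), {})
        if not versions:
            return None
        if timestamp is None:
            timestamp = max(versions)
        return versions.get(timestamp)

    def scan(self, start_row: str, end_row: str):
        """Yield cells with start_row <= row < end_row in row-key order,
        newest version first within each cell."""
        for (row, column) in sorted(self._cells):
            if start_row <= row < end_row:
                versions = self._cells[(row, column)]
                for ts in sorted(versions, reverse=True):
                    yield row, column, ts, versions[ts]


t = TinyTable()
t.put("15", "name:", 1, "John")
t.put("29", "name:", 1, "Bob")
t.put("29", "name:", 2, "Robert")
t.put("29", "contactInfo:email", 1, "bob@gmail.com")
print(t.get("29", "name:"))      # Robert (newest version)
print(t.get("29", "name:", 1))   # Bob
print(t.get("15", "contactInfo:email"))  # None: the map is sparse
```

Row keys are compared as strings, so the scan order is lexicographic, which is also what makes contiguous row ranges a natural unit for splitting the table.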

- 41. BigTable – Data Model • Tablets: – Large tables are broken into tablets at row boundaries. – A tablet holds a contiguous range of rows. – Approximately 100-200 MB of data per tablet. • [Figure: a table sorted by id, with rows 15000 to 20000 forming Tablet 1 and rows 20001 to 25000 forming Tablet 2.]
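Because tablets cover contiguous, sorted row ranges, finding the tablet for a row key is a binary search over the tablets' end keys. The sketch below assumes two tablets with the boundaries shown in the slide's figure and fixed-width string keys; the list and function names are invented.

```python
import bisect

# Hypothetical tablets, each identified by the last row key it holds.
TABLET_END_KEYS = ["20000", "25000"]
TABLET_NAMES = ["Tablet 1", "Tablet 2"]


def tablet_for(row_key: str) -> str:
    """Return the tablet whose row range contains row_key."""
    idx = bisect.bisect_left(TABLET_END_KEYS, row_key)
    if idx == len(TABLET_END_KEYS):
        raise KeyError(f"row key {row_key!r} is beyond the last tablet")
    return TABLET_NAMES[idx]


print(tablet_for("15000"))  # Tablet 1
print(tablet_for("20000"))  # Tablet 1 (end key is inclusive)
print(tablet_for("20001"))  # Tablet 2
```

Keys are compared lexicographically, as in Bigtable, so numeric ids only sort as expected when they are padded to the same width.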

- 42. BigTable – API • Metadata operations: – Creating and deleting tables and column families, modifying access control rights. • Client operations: – Write/delete values – Read values – Scan row ranges • Example (C++):

      // Open the table
      Table *T = OpenOrDie("/bigtable/users");
      // Update name and delete a phone
      RowMutation r1(T, "29");
      r1.Set("name:", "Robert");
      r1.Delete("contactInfo:phone");
      Operation op;
      Apply(&op, &r1);

- 43. BigTable – Building Blocks • GFS – large-scale distributed file system. GHEMAWAT, GOBIOFF, LEUNG, The Google file system. (Dec. 2003) • Chubby – distributed lock service. BURROWS, The Chubby lock service for loosely coupled distributed systems (Nov. 2006) • SSTable – file format to store BigTable data.

- 44. BigTable – Building Blocks • GFS: – Files are broken into chunks (typically 64 MB). – A master manages metadata. – Data transfers happen directly between clients and chunkservers.

- 45. BigTable – Building Blocks • Chubby : – Provides a namespace of directories and small files : • Each directory or file can be used as a lock. • Reads and writes to a file are atomic. – Chubby clients maintain sessions with Chubby : • When a client's session expires, it loses any locks. – Highly available : • 5 active replicas, one of which is elected to be the master. • Live when a majority of the replicas are running and can communicate with each other.

- 46. BigTable – Building Blocks • SSTable : – Stored in GFS. – Persistent, ordered, immutable map from keys to values. – Provided operations : • Get value by key • Iterate over all key/value pairs in a specified key range.

- 47. BigTable – System Structure • Three major components: – Client library – Master (exactly one): • Assigns tablets to tablet servers. • Detects the addition and expiration of tablet servers. • Balances tablet-server load. • Garbage-collects files in GFS. • Handles schema changes such as table and column family creations. – Tablet servers (multiple, dynamically added): • Each manages 10-100 tablets. • Handle read and write requests to their tablets. • Split tablets that have grown too large.

- 48. BigTable – System Structure

- 49. BigTable – Tablet Location • Three-level hierarchy analogous to that of a B+ tree to store tablet location information. • Client library caches tablet locations.

- 50. BigTable – Tablet Assignment • Tablet server: – When a tablet server starts, it creates, and acquires an exclusive lock on, a uniquely named file in a specific Chubby directory (the servers directory). • Master: – Grabs a unique master lock in Chubby, which prevents concurrent master instantiations. – Scans the servers directory in Chubby to find the live servers. – Communicates with every live tablet server to discover which tablets are already assigned to each server. – If a tablet server reports that it has lost its lock, or if the master was unable to reach a server during its last several attempts, the master deletes the server's lock file and reassigns its tablets. – Scans the METADATA table to find unassigned tablets and assigns them.

- 51. BigTable - Tablet Assignment • [Figure: the master, Chubby and the METADATA table, with Tablets 1, 2, 7 and 8 distributed between Tablet Server 1 and Tablet Server 2.]

- 52. BigTable – Tablet Serving • Writes : – Updates committed to a commit log. – Recently committed updates are stored in memory – memtable. – Older updates are stored in a sequence of SSTables. • Reads : – Read operation is executed on a merged view of the sequence of SSTables and the memtable. – Since the SSTables and the memtable are sorted, the merged view can be formed efficiently.

- 53. BigTable - Compactions • Minor compaction: – Converts the memtable into an SSTable. – Reduces memory usage. – Reduces log reads during recovery. • Merging compaction: – Merges the memtable and a few SSTables. – Reduces the number of SSTables. • Major compaction: – A merging compaction that results in a single SSTable. – No deletion records, only live data. – Good place to apply the policy “keep only N versions”.
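The tablet-serving and compaction slides can be tied together in a small in-memory model: writes go to a log and a memtable, reads consult the memtable and then the SSTables from newest to oldest, and compactions rewrite the SSTables. `TabletStore`, the tombstone marker and the read-only dictionaries standing in for SSTables are assumptions of this sketch; versions and the on-disk format are left out.

```python
from types import MappingProxyType

_TOMBSTONE = object()  # deletion record


class TabletStore:
    def __init__(self):
        self.commit_log = []  # redo records for updates not yet in an SSTable
        self.memtable = {}    # recently committed updates
        self.sstables = []    # immutable maps, newest first

    def write(self, key, value):
        self.commit_log.append((key, value))
        self.memtable[key] = value

    def delete(self, key):
        self.write(key, _TOMBSTONE)

    def read(self, key):
        # Merged view: the newest source that knows the key wins.
        for table in [self.memtable, *self.sstables]:
            if key in table:
                value = table[key]
                return None if value is _TOMBSTONE else value
        return None

    def minor_compaction(self):
        """Freeze the memtable into a new sorted, read-only SSTable."""
        if self.memtable:
            frozen = MappingProxyType(dict(sorted(self.memtable.items())))
            self.sstables.insert(0, frozen)
            self.memtable = {}
            self.commit_log.clear()  # these updates no longer need replay

    def major_compaction(self):
        """Rewrite everything into one SSTable holding only live data."""
        self.minor_compaction()
        merged = {}
        for table in reversed(self.sstables):  # oldest first, newer overwrite
            merged.update(table)
        live = {k: v for k, v in sorted(merged.items()) if v is not _TOMBSTONE}
        self.sstables = [MappingProxyType(live)]


store = TabletStore()
store.write("a", 1)
store.minor_compaction()
store.write("a", 2)
print(store.read("a"))      # 2: the memtable shadows the older SSTable
store.delete("a")
store.write("b", 3)
store.major_compaction()
print(len(store.sstables), store.read("a"), store.read("b"))  # 1 None 3
```

Keeping SSTables immutable means a read never has to coordinate with a writer on them; only the memtable is mutable.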

- 54. BigTable – Bloom Filters • Bloom filter: 1. Start with an empty array a of m bits, all set to 0. 2. Choose a hash function h that maps each element to one of the m array positions with a uniform random distribution. 3. To add an element e, set a[h(e)] = 1. • Example: S1 = {“John Smith”, “Lisa Smith”, “Sam Doe”, “Sandra Dee”} gives the bit array 0 1 1 0 1 0 0 0 0 0 0 0 0 0 1 0.

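- 55. BigTable – Bloom Filters • A Bloom filter tells whether an SSTable might contain any data for a given row/column pair, so a read can skip SSTables that certainly do not. • This drastically reduces the number of disk seeks required for read operations!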
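A minimal Bloom filter along the lines of the recipe above might look as follows. The default of one hash function matches the slide's description; the `num_hashes` parameter, the salted SHA-256 hashing and the class name are assumptions added for illustration.

```python
import hashlib


class BloomFilter:
    def __init__(self, m: int = 16, num_hashes: int = 1):
        self.m = m
        self.num_hashes = num_hashes
        self.bits = [0] * m  # empty array of m bits

    def _positions(self, element: str):
        # Derive num_hashes array positions by salting the hash input.
        for i in range(self.num_hashes):
            digest = hashlib.sha256(f"{i}:{element}".encode("utf-8")).digest()
            yield int.from_bytes(digest, "big") % self.m

    def add(self, element: str) -> None:
        for pos in self._positions(element):
            self.bits[pos] = 1

    def might_contain(self, element: str) -> bool:
        """False means definitely absent; True means possibly present."""
        return all(self.bits[pos] for pos in self._positions(element))


bf = BloomFilter(m=16)
for name in ["John Smith", "Lisa Smith", "Sam Doe", "Sandra Dee"]:
    bf.add(name)
print(bf.might_contain("John Smith"))  # True
print(bf.bits)
```

A negative answer is always correct, which is the property a read path relies on to skip an SSTable; a positive answer may be a false positive and still requires looking at the data.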

- 56. Overview • Introduction to DDBS and NoSQL √ • CAP Theorem √ • Dynamo (AP) √ • BigTable (CP) √ • Dynamo vs. BigTable

- 57. Dynamo vs. BigTable

  | | Dynamo | BigTable |
  |---|---|---|
  | data model | key-value | multidimensional map |
  | operations | by key | by key range |
  | partition | random | ordered |
  | replication | sloppy quorum | only in GFS |
  | architecture | decentralized | hierarchical |
  | consistency | eventual | strong (*) |
  | access control | no | column family |

- 58. Overview • Introduction to DDBS and NoSQL √ • CAP Theorem √ • Dynamo (AP) √ • BigTable (CP) √ • Dynamo vs. BigTable √

- 59. References • R. Ramakrishnan and J. Gehrke, Database Management Systems, 3rd edition, pp. 736-751. • S. Gilbert and N. Lynch, "Brewer's Conjecture and the Feasibility of Consistent, Available, Partition-Tolerant Web Services," ACM SIGACT News, June 2002, pp. 51-59. • E. Brewer, "CAP twelve years later: How the 'rules' have changed," IEEE Computer 45, 2 (Feb. 2012), pp. 23-29. • S. Gilbert and N. Lynch, "Perspectives on the CAP Theorem," IEEE Computer 45, 2 (Feb. 2012), pp. 30-36. • G. DeCandia et al., "Dynamo: Amazon's Highly Available Key-Value Store," Proc. 21st ACM SIGOPS Symp. Operating Systems Principles (SOSP 07), ACM, 2007, pp. 205-220. • F. Chang et al., "Bigtable: A Distributed Storage System for Structured Data," Proc. 7th Usenix Symp. Operating Systems Design and Implementation (OSDI 06), Usenix, 2006, pp. 205-218. • S. Ghemawat, H. Gobioff, and S. Leung, "The Google File System," Proc. 19th ACM SOSP (Dec. 2003), pp. 29-43.

Editor's Notes

- Immutable. On reads, no concurrency control needed. Index is of block ranges, not values.