Introduction to Recommendation System

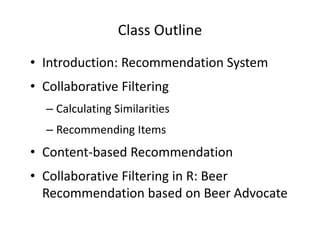

- 1. Class Outline
    - Introduction: Recommendation System
    - Collaborative Filtering
        - Calculating Similarities
        - Recommending Items
    - Content-based Recommendation
    - Collaborative Filtering in R: Beer Recommendation based on Beer Advocate

- 2. Recommendation System Demo http://blog.yhathq.com/posts/recommender-system-in-r.html

- 3. Examples
    - Retail: Amazon
    - Movie: Netflix
    - Friends: Facebook
    - Professional connection: LinkedIn
    - Websites: Reddit

- 4. Key Ideas
    - Intuition: the low-tech way to get a recommendation is to ask your friends!
        - Some of your friends have better “taste” than others (like-minded)
    - Problem: not scalable
        - As more and more options become available, it becomes less practical to decide what you want by asking a small group of people
        - They may not be aware of all the options
    - Solution: collaborative filtering or KNN
        - Searches a large group of people and finds a smaller set with tastes similar to yours
        - Looks at other things they like and combines them to create a ranked list of suggestions
        - First used by David Goldberg (Xerox PARC, 1992): “Using collaborative filtering to weave an information tapestry.”

- 5. Input Data
    - Explicit (questioning)
        - Explicit rating (1-5 numerical ratings)
        - Favorites (likes): 1 (liked), 0 (no vote), -1 (disliked)
    - Implicit (behavioral)
        - Purchase: 1 (bought), 0 (didn’t buy)
        - Clicks: 1 (clicked), 0 (didn’t click)
        - Reads: 1 (read), 0 (didn’t read)
        - Watching a video: 1 (watched), 0 (didn’t watch)
        - Hybrid: 2 (bought), 1 (browsed), 0 (didn’t buy)
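
As a rough illustration of the hybrid coding on this slide, the sketch below collapses a raw event log into 2/1/0 preference values. The event names (`"purchase"`, `"view"`), the sample log, and the function `hybrid_scores` are hypothetical, chosen only for this example.

```python
from collections import defaultdict

# Hybrid implicit coding from the slide: 2 (bought), 1 (browsed), 0 (didn't buy).
# The event names are assumptions made for this sketch.
EVENT_SCORE = {"purchase": 2, "view": 1}


def hybrid_scores(events):
    """Keep the strongest signal seen for each (user, item) pair."""
    scores = defaultdict(dict)
    for user, item, event in events:
        value = EVENT_SCORE.get(event, 0)  # unknown events count as 0
        scores[user][item] = max(scores[user].get(item, 0), value)
    return dict(scores)


# Invented event log: (user, item, event)
events = [
    ("u1", "beer_a", "view"),
    ("u1", "beer_a", "purchase"),
    ("u1", "beer_b", "view"),
    ("u2", "beer_b", "search"),
]
prefs = hybrid_scores(events)
print(prefs)
```

Taking the maximum means a later "view" never downgrades an earlier purchase, which seems to match the intent of the 2/1/0 scale.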

- 6. Recommendation vs. Prediction
    - Recommendations
        - Suggestions
        - Top-N
    - Predictions
        - Ratings
        - Purchase

- 7. Preference Data: Structure
    - Rows: customers/users
    - Columns: items
    - Large matrix Y(u,i)
        - Many zeros (sparse)
        - Number of users: large (on the order of millions)
        - Number of observations per customer: large (200+)
        - Time/sequence information ignored

    | Customer ID | Lady in the Water | Snakes on a Plane | Just My Luck | Superman Returns | You, Me, and Dupree | The Night Listener |
    | --- | --- | --- | --- | --- | --- | --- |
    | Michael | 2.5 | 3.5 | 3.0 | 3.5 | 2.5 | 3.0 |
    | Jay | | 3.5 | | | 3.5 | 3.0 |
    | July | | 3.5 | 3.0 | 4.0 | 2.5 | 4.5 |
    | Peter | 3.0 | 4.0 | 2.0 | 3.0 | | 3.0 |
    | Stephen | 3.0 | 4.0 | | 5.0 | | 3.0 |
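
One way to hold the matrix Y(u,i) from this slide is a dictionary of dictionaries that stores only the observed ratings. In the sketch below, the position of each missing rating is an inference: blanks are placed so that the column totals on slide 12 come out right. The helper `y` is a hypothetical name.

```python
# Ratings from the slide; a missing key means "not rated".
# Placement of the missing ratings is inferred, not stated in the slide.
ratings = {
    "Michael": {"Lady in the Water": 2.5, "Snakes on a Plane": 3.5,
                "Just My Luck": 3.0, "Superman Returns": 3.5,
                "You, Me, and Dupree": 2.5, "The Night Listener": 3.0},
    "Jay": {"Snakes on a Plane": 3.5, "You, Me, and Dupree": 3.5,
            "The Night Listener": 3.0},
    "July": {"Snakes on a Plane": 3.5, "Just My Luck": 3.0,
             "Superman Returns": 4.0, "You, Me, and Dupree": 2.5,
             "The Night Listener": 4.5},
    "Peter": {"Lady in the Water": 3.0, "Snakes on a Plane": 4.0,
              "Just My Luck": 2.0, "Superman Returns": 3.0,
              "The Night Listener": 3.0},
    "Stephen": {"Lady in the Water": 3.0, "Snakes on a Plane": 4.0,
                "Superman Returns": 5.0, "The Night Listener": 3.0},
}


def y(user, item):
    """Return Y(u, i), or None when the user has not rated the item."""
    return ratings.get(user, {}).get(item)


# Share of empty cells in the full users x items matrix.
items = sorted({item for user_ratings in ratings.values() for item in user_ratings})
n_cells = len(ratings) * len(items)
n_rated = sum(len(user_ratings) for user_ratings in ratings.values())
sparsity = 1 - n_rated / n_cells
print(f"{n_rated} of {n_cells} cells observed, sparsity = {sparsity:.2f}")
```

Storing only observed ratings keeps memory proportional to the number of ratings rather than users × items, which matters once the matrix is as sparse as the slide describes.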

- 8. Collaborative Filtering Tasks
    - 1. Finding Similar Users: Calculating Similarities
    - 2. Ranking the Users
    - 3. Recommending Items based on weighted preference data

- 9. Finding Similar Users
    - Calculate pair-wise similarities
        - Euclidean distance: simple, but subject to rating inflation
        - Cosine similarity: better with binary/fractional data
        - Pearson correlation: continuous variables (e.g. numerical ratings)
        - Others: Jaccard coefficient, Manhattan distance
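
The three main measures on this slide can be sketched in a few lines. The version below is a minimal illustration, assuming each user is a dictionary of item ratings and that only co-rated items are compared. The function names are hypothetical, and the ratings are Toby's, Michael's, and July's from the other slides.

```python
import math


def shared_items(a, b):
    """Items rated by both users."""
    return [item for item in a if item in b]


def euclidean_distance(a, b):
    shared = shared_items(a, b)
    return math.sqrt(sum((a[i] - b[i]) ** 2 for i in shared))


def cosine_similarity(a, b):
    shared = shared_items(a, b)
    dot = sum(a[i] * b[i] for i in shared)
    norm_a = math.sqrt(sum(a[i] ** 2 for i in shared))
    norm_b = math.sqrt(sum(b[i] ** 2 for i in shared))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def pearson(a, b):
    shared = shared_items(a, b)
    if len(shared) < 2:
        return 0.0  # too few co-rated items to correlate
    mean_a = sum(a[i] for i in shared) / len(shared)
    mean_b = sum(b[i] for i in shared) / len(shared)
    cov = sum((a[i] - mean_a) * (b[i] - mean_b) for i in shared)
    var_a = sum((a[i] - mean_a) ** 2 for i in shared)
    var_b = sum((b[i] - mean_b) ** 2 for i in shared)
    if var_a == 0 or var_b == 0:
        return 0.0  # constant ratings: correlation undefined, treated as 0 here
    return cov / math.sqrt(var_a * var_b)


toby = {"Snakes on a Plane": 4.5, "You, Me, and Dupree": 1.0,
        "Superman Returns": 4.0}
michael = {"Lady in the Water": 2.5, "Snakes on a Plane": 3.5,
           "Just My Luck": 3.0, "Superman Returns": 3.5,
           "You, Me, and Dupree": 2.5, "The Night Listener": 3.0}
july = {"Snakes on a Plane": 3.5, "Just My Luck": 3.0,
        "Superman Returns": 4.0, "You, Me, and Dupree": 2.5,
        "The Night Listener": 4.5}

print(round(pearson(toby, michael), 2))  # 0.99
print(round(pearson(toby, july), 2))     # 0.89
```

Pearson correlation subtracts each user's mean, so a customer who rates everything a point higher than Toby still counts as similar. Raw Euclidean distance would penalize that offset, which is the "rating inflation" issue noted on the slide.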

- 10. Ranking the Users
    - Focal customer: Toby
        - Preference vector: (“Snakes on a Plane”: 4.5, “You, Me, and Dupree”: 1.0, “Superman Returns”: 4.0)

    | Customer ID | Pearson Correlation Similarity |
    | --- | --- |
    | Michael | 0.99 |
    | Jay | 0.38 |
    | July | 0.89 |
    | Peter | 0.92 |
    | Stephen | 0.66 |

    - Top 3 matches: Michael, Peter, July -> like-minded!
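
Ranking is a sort on the similarity scores. The sketch below takes the Pearson values from this slide as given rather than recomputing them. `top_matches` is a hypothetical helper name.

```python
# Similarity of each customer to Toby, copied from the slide.
similarity_to_toby = {
    "Michael": 0.99,
    "Jay": 0.38,
    "July": 0.89,
    "Peter": 0.92,
    "Stephen": 0.66,
}


def top_matches(similarities, n=3):
    """Return the n most similar customers as (name, similarity) pairs."""
    ranked = sorted(similarities.items(), key=lambda pair: pair[1], reverse=True)
    return ranked[:n]


for name, sim in top_matches(similarity_to_toby):
    print(f"{name}: {sim:.2f}")
```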

- 11. Recommending Items – 1/2
    - Problems: if we only use the top 1 like-minded customer
        - May accidentally turn up a customer who hasn’t reviewed some of the movies that I might like
        - Could return a customer who strangely liked a movie that got bad reviews from all other customers
    - Solution: score each item with a weighted score across the ranked customers (weights by similarity)

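- 12. Recommending Items – 2/2

    | Customer ID | Similarity | Lady in the Water | Snakes on a Plane | Just My Luck | Superman Returns | You, Me, and Dupree | The Night Listener |
    | --- | --- | --- | --- | --- | --- | --- | --- |
    | Michael | 0.99 | 2.5 | 3.5 | 3.0 | 3.5 | 2.5 | 3.0 |
    | Jay | 0.38 | | 3.5 | | | 3.5 | 3.0 |
    | July | 0.89 | | 3.5 | 3.0 | 4.0 | 2.5 | 4.5 |
    | Peter | 0.92 | 3.0 | 4.0 | 2.0 | 3.0 | | 3.0 |
    | Stephen | 0.66 | 3.0 | 4.0 | | 5.0 | | 3.0 |
    | Total | | 8.5 | 18.5 | 8 | 15.5 | 8.5 | 16.5 |
    | Sim. Sum | | 2.57 | 3.84 | 2.8 | 3.46 | 2.26 | 3.84 |
    | Total/Sim. Sum | | 3.31 | 4.82 | 2.86 | 4.48 | 3.76 | 4.30 |
    | Rank | | | 1 | | 2 | | 3 |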
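
The numbers in this table can be reproduced as follows. Judging from the values, Total is the plain sum of the ratings in each column, and Sim. Sum adds the similarities of the customers who rated that item. The sketch also computes a similarity-weighted average, which multiplies each rating by the rater's similarity before summing. That second score is a common alternative and an assumption about what "weights by similarity" intends, not something shown on the slide. The placement of missing ratings is inferred from the column totals.

```python
ratings = {
    "Michael": {"Lady in the Water": 2.5, "Snakes on a Plane": 3.5,
                "Just My Luck": 3.0, "Superman Returns": 3.5,
                "You, Me, and Dupree": 2.5, "The Night Listener": 3.0},
    "Jay": {"Snakes on a Plane": 3.5, "You, Me, and Dupree": 3.5,
            "The Night Listener": 3.0},
    "July": {"Snakes on a Plane": 3.5, "Just My Luck": 3.0,
             "Superman Returns": 4.0, "You, Me, and Dupree": 2.5,
             "The Night Listener": 4.5},
    "Peter": {"Lady in the Water": 3.0, "Snakes on a Plane": 4.0,
              "Just My Luck": 2.0, "Superman Returns": 3.0,
              "The Night Listener": 3.0},
    "Stephen": {"Lady in the Water": 3.0, "Snakes on a Plane": 4.0,
                "Superman Returns": 5.0, "The Night Listener": 3.0},
}
similarity = {"Michael": 0.99, "Jay": 0.38, "July": 0.89,
              "Peter": 0.92, "Stephen": 0.66}


def score_items(ratings, similarity):
    """Return (slide_score, weighted_mean), each keyed by item."""
    total, weighted_total, sim_sum = {}, {}, {}
    for user, user_ratings in ratings.items():
        weight = similarity[user]
        for item, rating in user_ratings.items():
            total[item] = total.get(item, 0.0) + rating
            weighted_total[item] = weighted_total.get(item, 0.0) + weight * rating
            sim_sum[item] = sim_sum.get(item, 0.0) + weight
    # Slide's score: unweighted Total divided by Sim. Sum.
    slide_score = {item: total[item] / sim_sum[item] for item in total}
    # Alternative (assumption): similarity-weighted average rating.
    weighted_mean = {item: weighted_total[item] / sim_sum[item] for item in total}
    return slide_score, weighted_mean


slide_score, weighted_mean = score_items(ratings, similarity)
top3 = sorted(slide_score, key=slide_score.get, reverse=True)[:3]
for item in top3:
    print(f"{item}: {slide_score[item]:.2f} (weighted mean {weighted_mean[item]:.2f})")
```

The weighted mean always stays inside the 1-5 rating range, so it can be read as a predicted rating. In practice, items the focal customer has already rated would usually be filtered out before ranking.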

- 13. Problems with Collaborative Filtering
    - When data are sparse, correlations (weights) are based on very few common items -> unreliable
    - It cannot handle new items
    - It does not incorporate attribute information
    - Alternative way: content-based recommendations
        - Let’s use attribute information!

- 14. Content-based Recommendations
    - 1. Define features and feature values (similar to conjoint analysis)
    - 2. Describe each item as a vector of features
    - 3. Develop a user profile: the types of items this user likes
        - A weighted vector of item attributes
        - Weights denote the importance of each attribute to the user
    - 4. Recommend items that are similar to those that a user liked in the past
    - Note 1: Similar to information retrieval (text mining)
    - Note 2: Pre-computation possible; more scalable -> used by Amazon
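
The four steps above can be sketched as follows. Everything in this example is invented for illustration: the feature names, the item vectors, the user's past ratings, and the helper names. The profile is built as a rating-weighted average of the feature vectors of items the user rated, which is one simple choice among several.

```python
import math

# Steps 1-2: hypothetical features and item vectors (all values invented).
FEATURES = ("hoppy", "dark", "strong")
items = {
    "ipa_a": (0.9, 0.1, 0.5),
    "ipa_b": (0.8, 0.2, 0.6),
    "stout_a": (0.2, 0.9, 0.7),
    "lager_a": (0.3, 0.1, 0.2),
}
past_ratings = {"ipa_a": 5.0, "lager_a": 2.0}  # hypothetical user history


def user_profile(past_ratings, items):
    """Step 3: rating-weighted average of the rated items' feature vectors."""
    total = sum(past_ratings.values())
    return tuple(
        sum(rating * items[name][k] for name, rating in past_ratings.items()) / total
        for k in range(len(FEATURES))
    )


def cosine(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def recommend(past_ratings, items, n=2):
    """Step 4: rank unseen items by similarity to the user profile."""
    profile = user_profile(past_ratings, items)
    candidates = {
        name: cosine(profile, vector)
        for name, vector in items.items()
        if name not in past_ratings
    }
    return sorted(candidates, key=candidates.get, reverse=True)[:n]


print(recommend(past_ratings, items))
```

Because the item vectors do not depend on other users' ratings, a new item can be scored as soon as its features are known, which collaborative filtering cannot do.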

- 15. More ideas for improvement
    - Ensemble methods (combining algorithms)
        - Most advanced/commercial algorithms combine kNN, matrix factorization (for handling large, sparse matrices), and other classifiers
    - Marginal propensity to buy with/without recommendation (instead of probability of buying)
        - Anand Bodapati (JMR 2008): a “Customers who buy this product buy these other products” kind of recommendation system frequently recommends what customers would have bought anyway, so it often only accelerates purchases rather than expanding sales
    - Incorporate text reviews: text review data can be used as a basis to calculate similarities (i.e. text mining)
        - Basic methods only rely on numerical ratings/purchase data

- 16. Collaborative Filtering in R: Beer Recommender from “Beer Advocate” Data

- 17. Beer Advocate Data
    - Each record: a beer’s name, brewery, metadata (style, ABV), numerical ratings (1-5), and a short text review (250-5000 characters)
    - ~1.5 million reviews posted on Beer Advocate from 1999 to 2011

- 18. Collaborative Filtering in R – 1/2
    - Step 0) Install “R” and packages
        - R program: http://www.r-project.org/
        - Package: http://cran.r-project.org/web/packages/tm/index.html
        - Package: http://cran.r-project.org/web/packages/twitteR/index.html
        - Package: http://cran.r-project.org/web/packages/wordcloud/index.html
        - Manual: http://cran.r-project.org/web/packages/tm/vignettes/tm.pdf
    - Step 1) Collecting preference data (ratings) from “Beer Advocate”: web crawling

- 19. Recommendation System in R – 2/2
    - Step 2) Cleaning/Formatting Data (Python)
    - Step 3) Importing Data into R
    - Step 4) Finding Similar Users: Calculating Similarities
    - Step 5) Ranking the Users
    - Step 6) Recommending Items

- 20. Reference
    - Books
        - Programming Collective Intelligence (Toby Segaran)
        - Machine Learning for Hackers (Drew Conway and John Myles White)
    - Articles (more technical)
        - Internet Recommendation Systems (Asim Ansari, Skander Essegaier, Rajeev Kohli)
        - Recommendation Systems with Purchase Data (Anand Bodapati)