STPCon Fall 2012

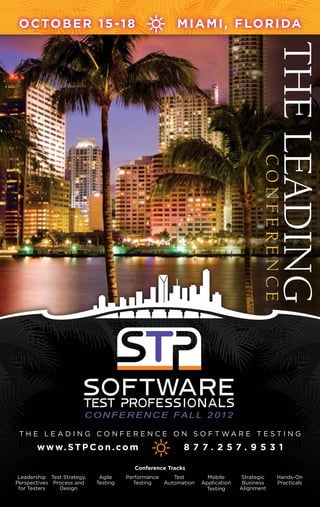

- 1. STPCon Fall 2012: The Leading Conference on Software Testing. October 15-18, Miami, Florida. www.STPCon.com | 877.257.9531

  Conference Tracks: Leadership Perspectives for Testers; Test Strategy, Process and Design; Agile Testing; Performance Testing; Test Automation; Mobile Application Testing; Strategic Business Alignment; Hands-On Practicals

- 2. Conference & Expo 2012, Coast to Coast. Registration Information: Conference Packages and Pricing

  Main Conference Package

  Dates: Tuesday, October 16 – Thursday, October 18

  Price: $1,295.00 on or before September 7 OR $1,695.00 after September 7

  - 4 Exceptional Keynotes
  - 50 Conference Breakout Sessions in 8 Comprehensive Tracks
  - Comprehensive Online Web Access to All Conference Sessions and Keynotes
  - Networking Activities Including: Speed Geeking Sessions; Wrap-Up Roundtables and Sponsored Topic Roundtable Discussions
  - Top Industry Vendors and Participation in the Sponsor Prize Giveaway
  - Three Breakfasts, Two Lunches and Happy Hour Welcome Reception

  Main Conference Package PLUS 1-Day

  Dates: Monday, October 15 – Thursday, October 18

  Price: $1,695.00 on or before September 7 OR $2,095.00 after September 7

  - Includes ALL of the Main Conference Package Offerings
  - Includes choice of a 1-day pre-conference workshop
  - Includes a pre-conference breakfast and lunch

  Discounts

  Early Bird Discount: Register on or before September 7 to receive $400.00 off any full conference package.

  Team Discounts

  | Number of Attendees | Rate Before Early Bird (9/7) | Rate After Early Bird (9/7) |
  |---|---|---|
  | 1-2 | $1,295 | $1,695 |
  | 3-5 | $1,195 | $1,395 |
  | 6-9 | $1,095 | $1,295 |
  | 10-14 | $995 | $1,195 |
  | 15+ | $895 | $1,095 |

  \*Price above is for access to the Main Conference Package. Please add $400 to your registration if you are registering for the Main Conference Package PLUS 1-Day.

  \*\*Team discounts are not combinable with any other discounts/offers. Teams must be from the same company and should be submitted into the online registration system on the same day.

  Register at www.STPCon.com or call 877.257.9531

- 3. Coast to Coast Conferences Package (For 6 or More Registrations Only – Book Now and Choose Between 2 Conferences)

  Dates: Software Test Professionals Conference Fall 2012 (October 16-18, 2012) AND Software Test Professionals Conference Spring 2013 (April 22-24, 2013)

  Price: Take advantage of the team discounts, but choose which conference each team member should attend. Register your entire team at the discounted rates listed in the Team Discount Chart, and then decide which conference they should attend:

  - Software Test Professionals Conference Fall 2012 in Miami, FL on October 16-18, 2012 OR
  - Software Test Professionals Conference Spring 2013 in San Diego, CA on April 22-24, 2013

  Conference Hotel

  Hilton Miami Downtown, 1601 Biscayne Boulevard | Miami, FL 33132

  A discounted conference rate of $129.00 is available for conference attendees. Call 1-800-HILTONS and reference GROUP CODE: STP2 / Software Test Professionals Conference rate or visit the event website at www.stpcon.com/hotel to reserve your room online. Rooms are available until September 25 or until they sell out. Don’t miss your opportunity to stay at the conference hotel.

  Cancellation Policy: All cancellations must be made in writing. You may cancel without penalty until September 14, 2012, after which a $150 cancellation fee will be charged. No-shows and cancellations after September 28, 2012 will be charged the full conference rate. Cancellation policies apply to both conference and pre-conference workshop registrations.

  Table of Contents

  - Registration: 2-3
  - Keynote Presentations: 4-5
  - Schedule at a Glance: 6-7
  - Conference Tracks: 8-9
  - Pre-Conference Workshops: 10-11
  - Session Block 1: 12-13
  - Session Block 2: 14-15
  - Session Block 3: 16-17
  - Session Block 4: 18-19
  - Session Block 5: 20-21
  - Session Block 6: 22-23
  - Session Block 7: 24-25
  - Session Block 8: 26-27
  - Session Block 9: 28-29
  - Session Block 10: 30-31

- 4. Keynotes

  Tuesday, 16 October (9:00am – 10:15am): Lee Henson, Chief Agile Enthusiast, AgileDad

  Testing Agility Leads to Business Fragility

  It comes as no surprise that with the downfall of the economy in the US, many organizations have made testing an afterthought. Some have scaled testing efforts back so far that the end product has suffered more greatly than one can imagine. This is resulting in major consumer frustration and a great lack of organizational understanding of how best to tackle this issue. Agile does not mean do more with less. As we journey into a new frontier where smaller is better, less is more, and faster is always the right answer, traditional testing efforts have been morphed and picked apart to only include the parts that people want to see and hear. Effective testing can be done in an efficient manner without sacrificing quality at the end of the day. Learn from real-world scenarios how others have recognized and conquered this issue. Embark on a journey with ‘Ely Executive’ as he realizes the value and importance of quality to both his internal and external customers. Discover how a host of internal and external players help Ely reach his conclusion and bask in the ‘I can relate to that’ syndrome this scenario presents. This epic adventure will surely prove memorable and will be one you do not want to miss!

  Lee Henson is currently one of just over 100 Certified Scrum Trainers (CST) worldwide. He is also a Project Management Professional (PMP) and a PMI-Agile Certified Practitioner and has worked as a GUI web developer, quality assurance analyst, automated test engineer, QA manager, product manager, project manager, ScrumMaster, agile coach, consultant, and training professional. Lee is a graduate of the Disney Management Institute and is the author of the Definitive Agile Checklist. He publishes the Agile Mentor Newsletter.

  Wednesday, 17 October: Jeff Havens, Speaker, Trainer, Author

  Uncrapify Your Life!

  This award-winning keynote is a study in exactly what not to do. Promising to give his audiences permission rather than advice, Jeff will ‘encourage’ your team to criticize others and outsource blame before bringing it all home with a serious discussion about proper communication, customer service, and accountability practices. Tired of being told what to do? Sick of attending sessions that tell you how to become a better communicator or a more effective leader? Definitely don’t want to hear any more garbage about effective change management? Experience something different. You won’t be told what to do. Instead, you will be given permission to do all of the things you’ve always wanted to do – to become the worst person you can possibly be – to be sure that you are conveniently left off of company emails inviting you to social functions. And if you’re not careful, you might actually learn something.

  Session Takeaways:

  - How to avoid negative and unproductive conversations
  - The power of sincere, straightforward communication
  - How to approach change in order to achieve seamless integration

  A Phi Beta Kappa graduate of Vanderbilt University, Jeff Havens began his career as a high school English teacher before branching into the world of stand-up comedy, where he worked with some of the brightest lights in American comedy and honed the art of engaging audiences through laughter. But his impulse to teach never faded, and soon he began looking for an avenue to combine both of his passions into entertaining and meaningful presentations.

- 5. Keynotes (continued)

  Wednesday, 17 October (this keynote will be offered immediately following lunch, 2:00pm – 2:45pm): Matt Johnston, CMO, uTest

  Mobile Market Metrics: Breaking Through the Hype

  Serving consumers’ voracious appetite for smartphones, tablets, e-readers, gaming consoles, connected TVs and apps, advancements in mobile technology are happening at warp speed. Manufacturers, carriers and app makers have all their chips on the table, launching dozens of unique devices per year, releasing new and improved operating systems, and aligning behind a multitude of browsers and OS standards. It’s a dizzying task for test and engineering professionals to keep up with all the changes, let alone figure out which ones need to be supported in their industry, in their company, and in their department. Yet, despite all the fragmentation in today’s mobile universe, tech professionals have to make difficult choices. Daunting? Yes. But there’s something better than tea leaves and a crystal ball to take some of the “guess” out of our guesstimations: mobile market trends and statistics. We’ll look at a wide variety of mobile metrics that cut through the hype, comparing the growing (and waning) popularity of different devices, operating systems and related tech that may influence attendees’ testing and development decisions. Rounding out the discussion, we’ll end with forward-looking insights into the most promising emerging technologies.

  Matt Johnston leads uTest’s marketing and community efforts as CMO, with more than a decade of marketing experience at companies ranging from early-stage startups to publicly traded enterprises. He continues to lead uTest’s efforts in shaping the brand, building awareness, generating leads and creating a world-class community of testers. Matt earned a B.A. in Marketing from Calvin College, as well as an MBA in Marketing Technology from New York University’s Stern School of Business.

  Thursday, 18 October (9:00am – 10:00am): Karen N. Johnson, Founder, Software Test Management, Inc.

  The Discipline Aspect of Software Testing

  Your mission is to regression test a website for the umpteenth time, preferably with fresh eyes and a thorough review. After all, you’re the one holding up this software from being used by paying customers. The pressure mounts and yet procrastination takes hold. It’s time to roll up your sleeves and finish the work at hand, and yet you just don’t feel like it. How do you discipline yourself to get the job done? Discipline. Focus. How do you pull on the reservoirs of these necessary skills? How do we invoke discipline to get the job done? To begin with, you have to admit you have a challenge to overcome. This presentation will discuss the reality of needing to be disciplined and focused to get work done, especially under pressure. Tactics will be shared for getting through stacks of work when you don’t feel inspired. Software testing takes a certain amount of discipline and rigor; it takes the ability to focus and think, frequently under stressful conditions. This presentation will provide an honest look at, as well as practical tips on, how to build rigor and discipline into your practice in software testing.

  Karen N. Johnson is an independent software test consultant. She is a frequent speaker at conferences. Karen is a contributing author to the book Beautiful Testing, released by O’Reilly publishers. She is the co-founder of the WREST workshop; more information on WREST can be found at: http://www.wrestworkshop.com/Home.html. She has published numerous articles and blogs about her experiences with software testing. You can visit her website at: http://www.karennjohnson.com.

- 6. Schedule at a Glance: October 15–18, 2012

  Monday, October 15, 2012

  - 8:00am - 4:00pm: Registration Information
  - 8:00am - 9:00am: Continental Breakfast & Networking
  - 9:00am - 4:00pm: Pre-Conference Workshops
    - Pre-1: Mobile Test Automation (Brad Johnson, Fred Beringer)
    - Pre-2: Testing Metrics: Process, Project, and Product (Rex Black)
    - Pre-3: New World Performance (Mark Tomlinson)
    - Pre-4: Hands-on: Remote Testing for Common Web Application Security Threats (David Rhoades)
  - 10:30am - 11:00am: Morning Beverage Break
  - 12:30pm - 1:30pm: Lunch
  - 3:00pm - 3:30pm: Afternoon Beverage Break

  Tuesday, October 16, 2012

  - 8:00am - 8:00pm: Registration Information
  - 8:00am - 9:00am: Breakfast
  - 9:00am - 10:15am: General Session: Testing Agility Leads to Business Fragility (Lee Henson)
  - 10:30am - 11:45am: Session Block 1
    - 101: Preparing the QA Budget, Effort & Test Activities – Part 1 (Paul Fratellone)
    - 102: Testing with Chaos (James Sivak)
    - 103: A to Z Testing in Production: Industry Leading Techniques to Leverage Big Data for Quality (Seth Eliot)
    - 104: Mobile Test Automation for Enterprise Business Applications (Sreekanth Singaraju)
    - 105: Test Like a Ninja: Hands-On Quick Attacks (Andy Tinkham)
  - 11:45am - 12:00pm: Morning Beverage Break
  - 12:00pm - 1:15pm: Session Block 2
    - 201: Preparing the QA Budget, Effort & Test Activities – Part 2 (Paul Fratellone)
    - 202: Software Reliability, the Definitive Measure of Quality (Lia Johnson)
    - 203: CSI: Miami – Solving Application Performance Whodunits (Kerry Field)
    - 204: Building a Solid Foundation for Agile Testing (Robert Walsh)
    - 205: Complement Virtual Test Labs with Service Virtualization (Wayne Ariola)
  - 1:15pm - 2:30pm: Lunch & Speed Geeking
  - 2:30pm - 3:45pm: Session Block 3
    - 301: Leading Cultural Change in a Community of Testers (Keith Klain)
    - 302: Software Testing Heuristics & Mnemonics (Karen Johnson)
    - 303: Interpreting and Reporting Performance Test Results – Part 1 (Dan Downing)
    - 304: Finding the Sweet Spot – Mobile Device Testing Diversity (Sherri Sobanski)
    - 305: The Trouble with Troubleshooting (Brian Gerhardt)
  - 4:00pm - 4:30pm: Tools and Trends Showcase
  - 4:30pm - 4:45pm: Afternoon Beverage Break
  - 4:45pm - 6:00pm: Session Block 4
    - 401: Don’t Ignore the Man Behind the Curtain (Bradley Baird)
    - 402: Optimizing Modular Test Automation (David Dang)
    - 403: Interpreting and Reporting Performance Test Results – Part 2 (Dan Downing)
    - 404: Agile vs. Fragile: A Disciplined Approach or an Excuse for Chaos (Brian Copeland)
    - 405: Mobile Software Testing Experience (Todd Schultz)
  - 6:00pm - 8:00pm: Exhibitor Hours
  - 6:00pm - 8:00pm: Happy Hour Welcome Reception

- 7. Schedule at a Glance (continued)

  Wednesday, October 17, 2012

  - 8:00am - 6:00pm: Registration Information
  - 8:00am - 9:00am: Breakfast & Sponsor Roundtables
  - 9:00am - 10:15am: General Session & Sponsor Prize Giveaway: Uncrapify Your Life (Jeff Havens)
  - 10:30am - 11:45am: Session Block 5
    - 501: Building A Successful Test Center of Excellence – Part 1 (Mike Lyles)
    - 502: How to Prevent Defects (Dwight Lamppert)
    - 503: The Testing Renaissance Has Arrived – On an iPad in the Cloud (Brad Johnson)
    - 504: Non-Regression Test Automation – Part 1 (Doug Hoffman)
    - 505: Slow-Motion Performance Analysis (Mark Tomlinson)
  - 11:45am - 12:00pm: Morning Beverage Break
  - 12:00pm - 1:15pm: Session Block 6
    - 601: Building A Successful Test Center of Excellence – Part 2 (Mike Lyles)
    - 602: Aetna Case Study – Model Office (Fariba Marvasti)
    - 603: Application Performance Test Planning Best Practices (Scott Moore)
    - 604: Non-Regression Test Automation – Part 2 (Doug Hoffman)
    - 605: Keeping Up! (Robert Walsh)
  - 1:15pm - 2:45pm: Lunch
  - 2:00pm - 2:45pm: General Session: Mobile Market Metrics: Breaking Through The Hype (Matt Johnston)
  - 3:00pm - 4:15pm: Session Block 7
    - 701: Technical Debt: A Treasure to Discover and Destroy (Lee Henson)
    - 702: Dependencies Gone Wild: Testing Composite Applications (Wayne Ariola)
    - 703: Top 3 Performance Land Mines and How to Address Them (Andreas Grabner)
    - 704: Building Automation From the Bottom Up, Not the Top Down (Jamie Condit)
    - 705: There Can Only Be One: A Testing Competition – Part 1 (Matt Heusser)
  - 4:15pm - 4:30pm: Afternoon Beverage Break
  - 4:30pm - 5:45pm: Session Block 8
    - 801: Maintaining Quality in a Period of Explosive Growth – A Case Study (Todd Schultz)
    - 802: Testing in the World of Kanban – The Evolution (Carl Shaulis)
    - 803: Refocusing Testing Strategy Within the Context of Product Maturity (Anna Royzman)
    - 804: Mobile Testing: Tools, Techniques & Target Devices (Uday Thongai)
    - 805: There Can Only Be One: A Testing Competition – Part 2 (Matt Heusser)

  Thursday, October 18, 2012

  - 8:00am - 1:00pm: Registration Information
  - 8:00am - 9:00am: Breakfast & Wrap-Up Roundtables
  - 9:00am - 10:00am: General Session: The Discipline Aspect of Software Testing (Karen N. Johnson)
  - 10:15am - 11:30am: Session Block 9
    - 901: 7 Habits of Highly Effective Testers (Rakesh Ranjan)
    - 902: Advances in Software Testing – A Panel Discussion (Matt Heusser)
    - 903: Performance Testing Metrics and Measures (Mark Tomlinson)
    - 904: How and Where to Invest Your Testing Automation Budget (Sreekanth Singaraju)
    - 905: Memory, Power and Bandwidth – Oh My! Mobile Testing Beyond the GUI (JeanAnn Harrison)
  - 11:30am - 11:45am: Morning Beverage Break
  - 11:45am - 1:00pm: Session Block 10
    - 1001: Redefining the Purpose of Software Testing (Joseph Ours)
    - 1002: Evaluating and Improving Usability (Philip Lew)
    - 1003: Real World Performance Testing in Production (Dan Bartow)
    - 1004: Scaling Gracefully and Testing Responsively (Richard Kriheli)
    - 1005: Quick, Easy & Useful Performance Testing: No Tools Required (Scott Barber)

- 8. Advanced Education: Conference Tracks

  Leadership Perspectives for Testers

  Understanding the business side of testing is as important as amplifying our approaches and techniques. In this track you will learn how to build a testing budget, effectively manage test teams, communicate with stakeholders, and advocate for testing.

  - 101: Preparing the QA Budget, Effort & Test Activities – Part 1
  - 201: Preparing the QA Budget, Effort & Test Activities – Part 2
  - 401: Don’t Ignore the Man Behind the Curtain
  - 701: Technical Debt: A Treasure to Discover and Destroy
  - 801: Maintaining Quality in a Period of Explosive Growth – A Case Study
  - 901: 7 Habits of Highly Effective Testers
  - 1001: Redefining the Purpose of Software Testing

  Strategic Business Alignment

  The best way to ensure that the goals of your project and the organization are met at the end of production is to make sure they are aligned from the beginning. This will require an ability to effectively lead diverse teams and gain buy-in and agreement throughout the life of the project. This track will offer sessions based on real-life experiences and case studies where true business alignment was achieved, resulting in a successful outcome.

  - 202: Software Reliability, The Definitive Measure of Quality
  - 301: Leading Cultural Change in a Community of Testers
  - 501: Building A Successful Test Center of Excellence – Part 1
  - 601: Building a Successful Test Center of Excellence – Part 2

  Test Strategy, Process and Design

  Before you begin testing on a project, your team should have a formal or informal test strategy. There are key elements you need to consider when formulating your test strategy. If not, you may be wasting valuable time, money and resources. In this track you will learn the strategic and practical approaches to software testing and test case design, based on the underlying software development methodology.

  - 102: Testing with Chaos
  - 302: Software Testing Heuristics & Mnemonics
  - 502: How to Prevent Defects
  - 602: Aetna Case Study – Model Office
  - 702: Dependencies Gone Wild: Testing Composite Applications
  - 802: Testing in the World of Kanban – The Evolution
  - 902: Advances in Software Testing – A Panel Discussion
  - 1002: Evaluating and Improving Usability

  Performance Testing

  Performance Testing is about collecting data on how applications perform to help the development team and the stakeholders make technical and business decisions related to performance risks. In this track you will learn practical skills, tools, and techniques for planning and executing effective performance tests. This track will include topics such as performance testing virtualized systems, performance anti-patterns, and how to quantify performance testing risk, all illustrated with practitioners’ actual experiences doing performance testing.

  - 103: A to Z Testing in Production: Industry Leading Techniques to Leverage Big Data for Quality
  - 203: CSI: Miami – Solving Application Performance Whodunits
  - 303: Interpreting and Reporting Performance Test Results – Part 1
  - 403: Interpreting and Reporting Performance Test Results – Part 2
  - 603: Application Performance Test Planning Best Practices
  - 703: Top 3 Performance Land Mines and How to Address Them
  - 903: Performance Testing Metrics and Measures
  - 1003: Real World Performance Testing in Production

- 9. Conference Tracks (continued)

  Test Automation

  Which tests can be automated? What tools and methodology can be used for automating functionality verification? Chances are these are some of the questions you are currently facing from your project manager. In this track you will learn how to implement an automation framework and how to organize test scripts for maintenance and reusability, as well as take away tips on how to make your automation framework more efficient.

  - 205: Complement Virtual Test Labs with Service Virtualization
  - 402: Optimizing Modular Test Automation
  - 504: Non-Regression Test Automation – Part 1
  - 604: Non-Regression Test Automation – Part 2
  - 704: Building Automation From the Bottom Up, Not the Top Down
  - 904: How and Where to Invest Your Testing Automation Budget

  Agile Testing

  The Manifesto for Agile Software Development was signed over a decade ago. The Agile framework’s focus on agility is anything but undisciplined. This track will help participants understand how they can fit traditional test practices into an Agile environment as well as explore real-world examples of testing projects and teams in varying degrees of Agile adoption.

  - 204: Building a Solid Foundation For Agile Testing
  - 404: Agile vs Fragile: A Disciplined Approach or an Excuse for Chaos
  - 605: Keeping Up!
  - 803: Refocusing Testing Strategy Within the Context of Product Maturity

  Mobile Application Testing

  The rapid expansion of mobile device software is altering the way we exchange information and do business. These days smartphones have been integrated into a growing number of business processes. Developing and testing software for mobile devices presents its own set of challenges. In this track participants will learn mobile testing techniques from real-world experiences as presented by a selection of industry experts.

  - 104: Mobile Test Automation for Enterprise Business Applications
  - 304: Finding the Sweet Spot – Mobile Device Testing Diversity
  - 503: The Testing Renaissance Has Arrived (on an iPad in the Cloud)
  - 804: Mobile Testing: Tools, Techniques & Target Devices
  - 1004: Scaling Gracefully and Testing Responsively

  Hands-On Practicals

  This track is uniquely designed to combine the best software testing theories with real-world techniques. Participants will learn by doing in this hands-on format simulating realistic test environments. After all, applying techniques learned in a classroom to your individual needs and requirements can be challenging. Seven technical sessions covering a wide range of testing topics will be presented. Participants must bring a laptop computer and power cord.

  - 105: Test Like a Ninja: Hands-On Quick Attacks
  - 305: The Trouble with Troubleshooting
  - 405: Mobile Software Testing Experience
  - 505: Slow-Motion Performance Analysis
  - 705: There Can Be Only One: A Testing Competition (Part 1)
  - 805: There Can Be Only One: A Testing Competition (Part 2)
  - 905: Memory, Power and Bandwidth – Oh My! Mobile Testing Beyond the GUI

- 10. Pre-Conference Workshops: Monday, 15 October, 9:00am – 4:00pm

  Pre-1: Mobile Test Automation

  Fred Beringer, VP, Product Management, SOASTA; Brad Johnson, VP Product and Channel Marketing, SOASTA

  In this full-day workshop, you will leave with the skills and tools to begin implementing your mobile functional test automation strategy. SOASTA’s Fred Beringer and Brad Johnson will walk you through the basics:

  - Creating your plan of “What to Test”
  - Enabling a mobile app to be testable
  - Capturing your first test cases
  - Setting validations and error conditions
  - Running and executing mobile functional test cases
  - Fun and Games: Mobile Test Automation Hackathon!

  Attendees will be provided free access and credentials to the CloudTest platform for this session. Tests will be built and executed using attendees’ own iOS or Android mobile devices, so come with your smartphones charged and ready to become part of the STPCon Fall Mobile Device Test Cloud!

  Brad Johnson joined the front lines of the Testing Renaissance in 2009 when he signed on with SOASTA to deliver testing on the CloudTest platform to a skeptical and established software testing market.

  Fred Beringer has 15 years of software development and testing experience managing large organizations where he was responsible for developing software and applications for customers.

  Pre-2: Testing Metrics: Process, Project, and Product

  Rex Black, President, RBCS

  Some of our favorite engagements involve helping clients implement metrics programs for testing. Facts and measures are the foundation of true understanding, but misuse of metrics is the cause of much confusion. How can we use metrics to manage testing? What metrics can we use to measure the test process? What metrics can we use to measure our progress in testing a project? What do metrics tell us about the quality of the product? In this workshop, Rex will share some things he’s learned about metrics that you can put to work right away, and you’ll work on some practical exercises to develop metrics for your testing.

  Workshop Outline

  - 1. Introduction
  - 2. The How and Why of Metrics: Presentation, Exercise
  - 3. Process Metrics: Presentation, Case study, Exercise
  - 4. Project Metrics: Presentation, Case study, Exercise
  - 5. Product Metrics: Presentation, Case study, Exercise
  - 6. Conclusion

  Learning Objectives

  - Understand the relationship between objectives and metrics
  - For a given objective, create one or more metrics and set goals for those metrics
  - Understand the use of metrics for process, project, and product measurement
  - Create metrics to measure effectiveness, efficiency, and stakeholder satisfaction for a test process
  - Create metrics to measure effectiveness, efficiency, and stakeholder satisfaction for a test project
  - Create metrics to measure effectiveness, efficiency, and stakeholder satisfaction for a product being tested

  Rex Black is a prolific author, practicing in the field of software testing today. His first book, Managing the Testing Process, has sold over 50,000 copies.

- 11. Pre-Conference Workshops (continued)

  Pre-3: New World Performance

  Mark Tomlinson, President, West Evergreen Consulting, LLC

  It’s always a significant challenge for performance testers and engineers to keep up with the breadth of hot new technologies and innovations coming at a ferocious pace in our industry. Not only has the technology and system landscape changed in the last few years, but the methods and techniques for performance testing are also updating rapidly. Our responsibility as performance testers and engineers is to learn and master these new approaches to performance testing, optimization and management and serve our organizations with the most valuable knowledge and skill possible. In my travels I’ve often heard questions from fellow performance testers: Out of all the new technologies out there, what should I be learning? What new tools for performance are out there? How do I better fit in with agile development and operations? How do I update and refresh my skills to keep current and valuable to my company? Spend a day with Mark and you will find answers to these questions.

  Mark’s workshop will help you to be better prepared to chart your own course for the future of performance and your career. Mark will share with you the most recent developments in performance testing tools, approaches and techniques, and how best to consider your current and future strategy around performance.

  Workshop Outline

  - Introduction to the New World of Performance
  - New Approaches and Methods
  - New Performance Roles
  - New Skills and Techniques
  - New Performance Tools
  - Conclusion

  Mark Tomlinson is a software tester and test engineer. His first test project in 1992 sought to prevent trains from running into each other – and Mark has metaphorically been preventing “train wrecks” for his customers for the past 20 years.

  Pre-4: Hands-on: Remote Testing for Common Web Application Security Threats

  David Rhoades, Senior Consultant, Maven Security Consulting, Inc.

  The proliferation of web-based applications has increased the enterprise’s exposure to a variety of threats. There are overarching steps that can and should be taken at various stages in the application’s lifecycle to prevent or mitigate these threats, such as implementing secure design and coding practices, performing source code audits, and maintaining proper audit trails to detect unauthorized use.

  This workshop, through hands-on labs and demonstrations, will introduce the student to the tools and techniques needed to remotely detect and validate the presence of common insecurities in web-based applications. Testing will be conducted from the perspective of the end user (as opposed to a source code audit). Security testing helps to fulfill industry best practices and validate implementation. Security testing is especially useful since it can be done at various phases within the application’s lifecycle (e.g. during development), or when source code is not available for review.

  This workshop will focus on the most popular and critical threats facing web applications, such as cross-site scripting (XSS) and SQL injection, based on the industry standard OWASP “Top Ten.” The foundation learned in this class will enable the student to go beyond the top ten via self-directed learning using other industry resources, such as the OWASP Testing Guide.

  David Rhoades is a senior consultant with Maven Security Consulting Inc., which provides information security assessments and training services to a global clientele. His expertise includes web application security, network security architectures, and vulnerability assessments.

- 12. 10:30 am – 11:45 am, Session block 1, Tuesday 16 October

  Seth Eliot is Senior Knowledge Engineer for Microsoft Test Excellence focusing on driving best practices for services and cloud development and testing across the company.

  Paul Fratellone, a 25 year career veteran in quality and testing, has been a Director of QA and is well-seasoned in preparing department wide budgets.

  Sreekanth Singaraju has more than 12 years of senior technology leadership experience and leads Alliance’s QA Testing organization in developing cutting edge solutions.

  James Sivak has been in the computer technology field for over 35 years, beginning with the Space Shuttle program and over the years has encompassed warehouse systems, cyclotrons, operating systems, and now virtual desktops.

  Andy Tinkham is an expert consultant, focusing on all aspects of quality and software testing (but most of all, test automation). Currently, he consults for Magenic, a leading provider of .NET and testing services.

  101 Paul Fratellone, Program Director Quality Test Consulting, MindTree Consulting: Preparing the QA Budget, Effort & Test Activities – Part 1

  This session will be an introduction to preparing a test team budget not only from a manpower perspective but also from the perspective of testing effort estimation and forecasting. The speaker will be pulling from real-life scenarios that have covered a myriad of situations of creating or inheriting a budget for testing services. Using these experiences, the speaker will highlight good practices and successes in addition to pitfalls to avoid. How to develop a budget for both project based (large enterprise wide projects through mid-sized) and portfolio/testing services support across the organization will be presented. In part one, the financial/accounting aspects of a budget (high-level) will be discussed and how full-time equivalents (FTEs) are truly accounted; the details of a “fully loaded” resource will be explained; and the difference between capital and operational expenditures and potential tax effects will be presented. We will also develop a resource estimation model that represents real-world situations and introduce confidence levels/iterations of a budget/resource plan.

  Session Takeaways:

  - How to prepare a QA/Testing Department Budget
  - How to create an estimation model

  102 James Sivak, Director of QA, Unidesk: Testing with Chaos

  Testers can become so focused on the testing at hand and how to make that as efficient as possible (generally driven by management directives) that environments and tests unconsciously get missed. Broadening the vision to include ideas from chaos and complexity theories can help to discover why certain classes of bugs only get found by the customer. This presentation will introduce these ideas and how they can be applied to tests and test environments. Incorporating randomness into tests

- 13. and the test environments will be discussed. In addition, thoughts on what to analyze in customer environments, looking through the lens of complexity, will be presented. Comparisons of environments with and without facets of chaos will be detailed to give the audience concrete examples. Other examples of introducing randomness into tests will be provided.

  You will walk away with ideas on why and how to add chaos to your testing, catching another class of bugs before your customers do.

  Session Takeaways:

  - Ideas on what makes testing and test environments complex
  - Benefits of adding chaos to the testing
  - Vision perspectives in viewing current test environments and how to disrupt the testing paradigm

  103 Seth Eliot, Senior Knowledge Engineer in Test, Microsoft: A to Z Testing in Production: Industry Leading Techniques to Leverage Big Data for Quality

  Testing in production (TiP) is a set of software methodologies that derive quality assessments not from test results run in a lab but from where your services actually run – in production. The big data pipe from real users and production environments can be used in a way that both leverages the diversity of production while mitigating risks to end users. By leveraging this diversity of production we are able to exercise code paths and use cases that we were unable to achieve in our test lab or did not anticipate in our test planning.

  This session introduces test managers and architects to TiP and gives these decision makers the tools to develop a TiP strategy for their service. Methodologies like Controlled Test Flights, Synthetic Test in Production, Load/Capacity Test in Production, Data Mining, Destructive Testing and more are illustrated with examples from Microsoft, Netflix, Amazon, and Google. Participants will see how these strategies boost ROI by moving focus to live site operations as their signal for quality.

  104 Sreekanth Singaraju, VP of Testing Services, Alliance Global Services: Mobile Test Automation for Enterprise Business Applications

  With the commercialization of IT, mobile apps are increasingly becoming a standard format of delivering applications in enterprises alongside Web applications. Testing organizations in these enterprises are facing the prospect of adding new testing interfaces and devices to the long list of existing needs. This session will showcase an approach and framework to developing a successful mobile test automation strategy using real life examples. Using a framework developed by integrating Robotium and Selenium, this session will walk participants through an approach to developing an integrated test automation framework that can test applications with web and mobile interfaces.

  This session highlights the following key aspects of developing an integrated automation framework:

  - Mobile test automation strategy
  - Test data management
  - Reporting
  - Test Lab configuration, including multi-device management
  - Challenges, lessons learned and best practices to develop a successful framework

  105 Andy Tinkham, Principal Consultant, Magenic: Test Like a Ninja: Hands-On Quick Attacks

  In this session, we’ll talk about quick attacks – small tests that can be done rapidly to find certain classes of bugs. These tests are easy to run and require little to no upfront planning, which makes them ideal for quickly getting feedback on your application under test. In this session, we’ll briefly talk about what quick tests are and then dive into specific attacks, trying them on sample applications (or maybe even some real applications!). This is a hands-on session, so bring a laptop or pair with someone who has one, and try these techniques live in the session!

  Session Takeaways:

  - A knowledge of some quick tests that you can use immediately in your own testing
  - Specific tools and techniques to execute these quick tests
  - Experience executing the quick tests
  - References for further exploration of quick tests and how they can be applied

- 14. 12:00 pm – 1:15 pm, Session block 2, Tuesday 16 October

  Wayne Ariola is Vice President of Strategy and Corporate Development at Parasoft, a leading provider of integrated software development management, quality lifecycle management, and dev/test environment management solutions.

  Kerry Field has over 35 years of experience in IT, including applications development, product and service support, systems and network management, functional QA, capacity planning, and application performance.

  Paul Fratellone, a 25 year career veteran in quality and testing, has been a Director of QA and is well-seasoned in preparing department wide budgets.

  Lia Johnson began her career at the CIA and NASA as a software developer upon graduation from Lamar University with a BBA in Computer Science and is now working for Baker Hughes Incorporated as a Software Testing Manager.

  Robert Walsh, a proponent of Agile software development processes and techniques, believes strongly in delivering quality solutions that solve real customer problems and provide tangible business value.

  201 Paul Fratellone, Program Director Quality Test Consulting, MindTree Consulting: Preparing the QA Budget, Effort & Test Activities – Part 2

  In Part 2 of this session, the speaker will showcase Excel-based worksheets to demonstrate test effort, test cycles and test case modeling techniques and how to introduce confidence in escalating levels/iterations of a budget as information increases in stability and accuracy throughout a project/testing support activity. Covered will also be the effects of the delivery life cycle methods and budgeting. How will testing budgets differ between waterfall and agile delivery methods/life cycles?

  Session Takeaways:

  - How to budget for test cycles
  - Dealing with Agile vs. Waterfall project budgets

  202 Lia Johnson, Manager, Drilling Evaluation Software Testing, Baker Hughes Inc.: Software Reliability, The Definitive Measure of Quality

  Throughout the project life-cycle, measures of quality, test success, and test completion are indicative of progress. The culmination of these metrics is often difficult for some stakeholders to translate for acceptance. Software reliability dependencies begin with model driven tests derived from use-cases/user stories in iterative or agile development methodologies. Aligning the test process with a project’s development methodology and in many cases PDM is integral to achieving acceptable levels of software reliability. Relevant dependencies that impact software reliability begin with test first, test continuously. Some dependencies are within the scope of responsibility for software test professionals. Others require collaboration with the entire project team. Developing applications according to standard coding practices is also essential to attain software quality. Unit tests developed and executed successfully impact software quality. These dependencies and more will be discussed as we explore the definitive measure of quality, software reliability.

- 15. Session Takeaways:

  - The Definitive Measure of Quality
  - The Importance of Process Alignment to Development Methodologies
  - Dependencies of Software Reliability

  203 Kerry Field, APM Specialist, CSS Management Team, US Bank: CSI: Miami – Solving Application Performance Whodunits

  Application performance problems continue to happen despite all the resources companies employ to prevent them. When the problem is difficult to solve, the organization will often bring in outside expertise. We see a similar theme played out on TV detective shows. When the local authorities are baffled, the likes of a Jim Rockford, Lt. Columbo, or Adrian Monk are brought in. These fictional detectives routinely solved difficult cases by applying their unique powers of observation, intuition, and deductive reasoning. The writers also gave each character a unique background or odd personality trait that enhanced their entertainment value. Solving real world performance problems requires much more than a combination of individual skills and an entertaining personality. This session will present examples of real life application performance issues taken from the author’s case files to illustrate how successful resolution depends on effective teamwork, sound troubleshooting process, and appropriate forensic tools.

  204 Robert Walsh, Senior Consultant, Excalibur Solutions, Inc.: Building a Solid Foundation For Agile Testing

  Software testing in Agile development environments has become a popular topic recently. Some believe that conventional testing is unnecessary in Agile environments. Further, some feel that all testing in Agile should be automated, diminishing both the role and the value of the professional tester. While automated testing is essential in Agile methodologies, manual testing has a significant part to play, too.

  Successful Agile testing depends on a strategy built on four pillars: automated unit testing, automated acceptance testing, automated regression testing, and manual exploratory testing. The four are interdependent, and each provides benefits that are necessary for the others to succeed. Each pillar is the responsibility of a different group within the Agile development team, and when everyone does his part, the result is a solution where the whole is greater than the sum of the parts.

  Session Takeaways:

  - Gain an understanding of the challenges faced when testing in Agile environments
  - Learn how the four pillars provide a solid foundation on which a successful testing organization can be built
  - Learn why the pillars are interdependent and understand why the absence of one leaves a gap in the testing effort

  205 Wayne Ariola, VP of Strategy, Parasoft: Complement Virtual Test Labs with Service Virtualization

  This session explains why service virtualization is the perfect complement to virtual test labs – especially when you need to test against dependent applications that are difficult or costly to access and/or difficult to configure for your testing needs.

  Virtual test labs are ideal for “staging” dependent applications that are somewhat easy to access and have low to medium complexity. However, if the dependent application is very complex, difficult to access, or expensive to access, service virtualization might be a better fit for your needs. Service virtualization fills the gap around the virtual test lab by giving your team a virtual endpoint in order to complete your test environment. This way, the developers and testers can access the necessary functionality whenever they want, as frequently as they want, without incurring any access fees. For another example, assume you have a mainframe that’s easily accessible but difficult to configure for testing. Service virtualization fills the gap by providing flexible access to the components of the mainframe that you need to access in order to exercise the application under test. It enables the team to test against a broad array of conditions – with minimal setup.

  Session Takeaways:

  - Remove roadblocks for performance testing, functional testing, and Agile/parallel development
  - Close the gap that exists with incomplete or capacity-constrained staged test environments
  - Streamline test environment provisioning time and costs beyond traditional virtualization

- 16. 2:30 pm – 3:45 pm, Session block 3, Tuesday 16 October

  Dan Downing teaches load testing and over the past 13 years has led hundreds of performance projects on applications ranging from eCommerce to ERP and companies ranging from startups to global enterprises.

  A Software Tester for the past 15 years, Brian Gerhardt started off in production support where his troubleshooting skills and relentless search for answers led to a career in Quality Assurance. He is currently with Liquidnet.

  Karen N. Johnson is an independent software test consultant, a contributing author to the book Beautiful Testing, and the co-founder of the WREST workshop.

  Keith Klain is the head of the Barclays Global Test Center, which provides functional and non-functional software testing services to the investment banking and wealth management businesses.

  Sherri Sobanski is currently the Senior Strategic Architect Advisor focusing on technical test strategy and innovation supporting Aetna’s testing team. Sherri is also the acting head of Aetna’s Mobile Testing Capability Center.

  301 Keith Klain, Director, Head of Global Test Center, Barclays: Leading Cultural Change in A Community of Testers

  When Keith Klain took over the Barclays Global Test Center, he found an organization focused entirely on managing projects, managing processes, and managing stakeholders – the last most unsuccessfully. Although the team was extremely proficient in test management, their misaligned priorities had the effect of continually hitting the bullseye on the wrong target. Keith immediately implemented changes to put a system in place to foster testing talent and drive out fear – abandoning worthless metrics and maturity programs, overhauling the training regime, and investing in a culture that rewards teamwork and innovation. The challenges of these monumental changes required a new kind of leadership – something quite different from traditional management.

  Find out how Keith is leading the Barclays Global Test Center and hear his practical experiences defining objectives and relating them to people’s personal goals. Learn about the Barclays Capital Global Test Center’s “Management Guiding Principles” and how you can adapt these principles to lead you and your team to a new and better place.

  302 Karen N. Johnson, Founder, Software Test Management, Inc.: Software Testing Heuristics & Mnemonics

  Are you curious about heuristics and how to use them? In this session, Karen Johnson explains what a heuristic is, what a mnemonic is, and how heuristics and mnemonics are sometimes used together. A number of both heuristics and mnemonics have been created in the software testing community and Karen reviews several of each and gives examples of how to use and apply heuristics and mnemonics. In this session, Karen outlines how to create your own mnemonics and heuristics. She also explores ways to use both as a way to guide exploratory testing efforts.

- 17. 303 Dan Downing, Principal Consultant, Mentora Group: Interpreting Performance Test Results – Part 1

  You’ve worked hard to define, develop and execute a performance test on a new application to determine its behavior under load. Your initial test results have filled a couple of 52 gallon drums with numbers. What next? Crank out a standard report from your testing tool, send it out, and call yourself done? NOT. Results interpretation is where a performance tester earns the real stripes.

  In the first half of this double session we’ll start by looking at some results from actual projects and together puzzle out the essential message in each. This will be a highly interactive session where I will display a graph, provide a little context, and ask “what do you see here?” We will form hypotheses, draw tentative conclusions, determine what further information we need to confirm them, and identify key target graphs that give us the best insight on system performance and bottlenecks. Feel free to bring your own sample results (on a thumb drive so I can load and display) that we can engage participants in interpreting!

  304 Sherri Sobanski, Senior Strategic Architect Advisor, Aetna: Finding the Sweet Spot – Mobile Device Testing Diversity

  How can you possibly do it all?! Infinite hardware/software combinations, BYOD, crowdsourcing, virtual capabilities, data security...the list goes on and on. Companies are struggling with insecure, complicated and expensive answers to the mobile testing challenges. The Aetna technical teams have created an innovative mobile testing set of solutions that not only protect data but allow for many different approaches to mobile testing across the lifecycle. Whether you want to test from the Corporate Lab, from your own backyard or halfway around the world, we have a solution…you choose.

  Session Takeaways:

  - How to tackle the complex nature of mobile testing
  - Techniques to address BYOD, Offshore Vendors, Crowdsourcing
  - Software capabilities for virtual testing of mobile devices
  - Private and public testing clouds

  305 Brian Gerhardt, Operations and Quality Lead, Liquidnet: The Trouble with Troubleshooting

  One of the most valuable skill sets a software tester can possess is the ability to quickly track down the root cause of a bug. A tester may describe what the bug appears to be doing, but far too often it is not enough information for developers, causing a repetitive code/build/test/re-code/re-build/re-test cycle to ensue. In this interactive session, you will rotate between learning investigative techniques and applying them to actual examples. You will practice reproducing the bug, isolating it, and reporting it in a concise, clear manner.

  This session will present several investigative methods and show how they can be used as a means of information gathering in determining the root cause of bugs. Common tests that are designed to eliminate false leads as well as tests that can be used to focus in on the suspect code will be shown and discussed in interactive examples.

  Session Takeaways:

  - Build a framework around your software to ease finding root causes of software issues
  - Quickly identify where the most likely cause of the errors is
  - Use a new, empirical investigative method for software testing
  - Deliver more concise, relevant information to developers and project managers when describing and detailing issues in the software, becoming more valuable in making fix/ship decisions

- 18. 4:45 pm – 6:00 pm, Session block 4, Tuesday 16 October

  Bradley Baird is an SQA professional and has worked at several software companies where he has created SQA departments and test labs from scratch, trained test teams and educated stakeholders everywhere on the values of SQA.

  Brian Copeland has been instrumental in the testing of critical business systems, from mission critical applications to commercial software.

  David Dang is an HP/Mercury Certified Instructor (CI) for QuickTest Professional, WinRunner, and Quality Center and has provided automation strategy and implementation plans to maximize ROI and minimize script maintenance.

  Dan Downing teaches load testing and over the past 13 years has led hundreds of performance projects on applications ranging from eCommerce to ERP and companies ranging from startups to global enterprises.

  Over the past 15 years Todd Schultz has tested many types of software, in many industries. Todd Schultz is passionate about professional development; mentoring Quality Assurance Engineers who see QA as a career, and not just a job.

  401 Bradley Baird, Senior Manager Product Quality, Harman International: Don’t Ignore the Man Behind the Curtain

  Just as in the Wizard of Oz where all the great pyrotechnics going on in public were controlled by the wizard hidden behind the curtain, so it is in QA where all the great quality that the customers see is controlled by the QA professional. If you ignore the “Wizard” you do so at the risk of compromising Quality. This session will cover the perils of ignoring the QA professional behind the curtain.

  We spend months or even years training a QA engineer only to see them walk out the door because a better opportunity came along. You don’t just lose a person; you lose knowledge that is sometimes hard to replace.

  In this session we will explore ideas on how to develop management traits and skills that we perform on a daily basis to make sure our wizards keep pulling those levers and tooting those horns for us instead of our competitor.

  Session Takeaways:

  - Ideas on managing or leading a QA team
  - How to treat a human as a person not a resource
  - How to give incentives with limited to no resources
  - How to add value through happy team members
  - How to show your value to upper management

  402 David Dang, Senior Automation Consultant, Zenegy Technologies: Optimizing Modular Test Automation

  Many companies have recognized the value of test automation frameworks. Appropriate test automation frameworks maximize ROI on automation tools and minimize script maintenance. One of the most common frameworks is the modular test automation approach. This approach uses the same concept as software development: building components or modules shared within an application. For test automation, the modular approach decomposes the application under test into functions or modules. The functions or modules are linked together to form automated test cases. While this

- 19. approach encourages reusability and maintainability, there are many challenges that must be considered and addressed at the start of a project.

  This presentation describes the key factors the QA group needs to consider during the design phase of implementing a modular test automation approach. The QA group will learn the aspects of the modular test automation approach, the benefits of implementing a modular approach, the pitfalls of a modular approach, and best practices to fully utilize the modular approach.

  403 Dan Downing, Principal Consultant, Mentora Group: Interpreting Performance Test Results – Part 2

  In the second part of this session, we will try to codify the analytic steps we went through in the first session where we learned to observe, form hypotheses, draw conclusions and take steps to confirm them, and finally report the results. The process can best be summarized in a CAVIAR approach for collecting and evaluating performance test results:

  - Collecting
  - Aggregating
  - Visualizing
  - Interpreting
  - Analyzing
  - Reporting

  We will also discuss an approach for reporting results in a clear and compelling manner, with data-supported observations, conclusions drawn from these observations, and actionable recommendations. A link will be provided to the reporting template that you can adopt or adapt to your own context. Come prepared to participate actively!

  404 Brian Copeland, QA Practice Director, Northway Solutions Group: Agile vs Fragile: A Disciplined Approach or an Excuse for Chaos

  The Manifesto for Agile Software Development was signed over a decade ago and establishes a set of principles aimed at increasing the speed at which customers can realize the value of a development undertaking. While the principles of Agile are a refreshing focus on delivery, they are often hijacked to become an excuse for the undisciplined development organization. The Agile framework’s focus on agility is anything but undisciplined with principles such as Test Driven Development (TDD); however rogue development organizations have pointed to Agile as the impetus to abandon all vestiges of process, documentation, and in many ways quality. These organizations have added a fifth principle to the manifesto: Speed of the Delivery over quality of the delivered.

  Session Takeaways:

  - Agile or Fragile: How to tell which your organization is
  - Agile Characteristics: The characteristics of excellent Agile teams
  - Agile Manifesto: What does it mean for processes and documentation?
  - Agile Testing: The role of “independent” testing in the Agile framework
  - Agile Tools: Do testing tools have a place in Agile development?
  - Agile Façade: Strategies for breaking down the façade

  405 Todd Schultz, Managing Senior Quality Assurance Engineer, Deloitte Digital: Mobile Software Testing Experience

  In this hands-on session, with devices in hand, we will investigate the issues that mobile testers deal with on a daily basis. We will explore both mobile optimized websites and native applications, in the name of consumer advocacy and continuous improvement and gain a new perspective on the mobile computing era as we arm ourselves with the knowledge needed to usher in a quality future for this emerging market.

  This session will focus on three of the biggest challenges in mobile application testing:

  - Device Testing – Device fragmentation is a challenge that will continue to increase, and full device coverage is often cost prohibitive. We will investigate how device fragmentation affects quality and learn the considerations involved in device sampling size.
  - Connectivity Testing – Although some applications have some form of offline mode, most were designed with constant connection in mind and can be near useless without a connection to the internet. We will investigate how vital connectivity is to the proper functioning of many mobile applications and explore various testing methodologies.
  - Interrupt Testing – Phone calls, text messages, alarms, push notifications and other functionality within our devices can have a serious impact on the application under test. We will investigate the types of issues these interruptions can cause and some strategies for testing.

- 20. 10:30 am – 11:45 am, Session block 5, Wednesday 17 October

  Doug Hoffman is a trainer in strategies for QA with over 30 years of experience. His technical focus is on test automation and test oracles. His management focus is on evaluating, recommending, and leading of quality improvement programs.

  Brad Johnson has been supporting testers since 2000 as head of monitoring and test products at Compuware, Mercury Interactive and Borland and is now with SOASTA where he delivers cloud testing on the CloudTest platform.

  Dwight Lamppert has over 15 years of software testing experience in the financial services industry and currently manages Software Testing Process Metrics at Franklin Templeton.

  Mike Lyles has 19 years of IT experience. His current role comprises Test Management responsibilities for a major company domain covering Store Systems, Supply Chain, Merchandising and Marketing.

  Mark Tomlinson is a software tester and test engineer. His first test project in 1992 sought to prevent trains from running into each other – and Mark has metaphorically been preventing “train wrecks” for his customers for the past 20 years.

  501 Mike Lyles, QA Manager, Lowe’s Companies, Inc.: Building A Successful Test Center of Excellence – Part 1

  In 2008, Lowe’s initiated a Quality Assurance group dedicated to independent testing for IT. This was Lowe’s first time separating development and testing efforts for the 62-year-old company. Mike Lyles was privileged to be part of this inaugural effort. The purpose of this two part session is to review a high level study of the building of a Test Center of Excellence within a mature IT organization that will be informative for companies of various sizes and growth related to Quality Assurance maturity.

  Session Part 1

  - The Startup – this session will describe how the testing center was formed initially, steps taken to build the team, baselining the process and methodology documentation, initial metrics planned for reporting, the process involved in selecting the pilot business areas that would be supported and how we prepared the organization for the changes.
  - What Worked – we will review the decisions made during the initial stages, those decisions that stood with the team throughout the process, and how the organization was socialized with the stakeholders

  Session Takeaways:

  - Documenting core processes required to build a team
  - Selecting the appropriate team member skill sets when building a team
  - Identifying core requirements when selecting a testing vendor
  - Establishing core metrics needed for the organization
  - Selecting the pilot group/business area for testing services

  502 Dwight Lamppert, Senior Test Manager, Franklin Templeton: How to Prevent Defects

  The session shows how to prevent defects from leaking to later stages of the SDLC. This is a successful case study in which awareness and focus on static testing was increased over a period of 2 years. Defect detection and leakage removal metrics were tracked for each of the projects. The first 2 projects exposed some issues. The metrics were shared and discussed with business partners – and various process improvements were implemented. The results on the 4 subsequent projects showed marked reductions in defects that leaked into system testing and production. The quality improvement also contributed to less cost and shorter timelines because re-work was controlled.

  Session Takeaways:

  - A focus on documentation reviews, walk-throughs, and inspections is a critical first step for building quality into the code early in the SDLC