Web scraping with Nutch and Solr

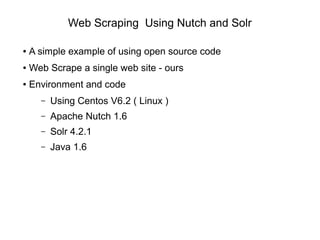

- 1. Web Scraping Using Nutch and Solr
  - A simple example of using open source code
  - Web scrape a single web site: ours
  - Environment and code
    - CentOS 6.2 (Linux)
    - Apache Nutch 1.6
    - Solr 4.2.1
    - Java 1.6

- 2. Nutch and Solr Architecture
  - Nutch processes URLs and feeds content to Solr
  - Solr indexes content

- 3. Where to get source code
  - Nutch: http://nutch.apache.org
  - Solr: http://lucene.apache.org/solr
  - Java: http://java.com

- 4. Installing Source - Nutch
  - Nutch is delivered as
    - apache-nutch-1.6-bin.tar (64M)
    - apache-nutch-1.6-src.tar (20M)
  - Copy each tar file to your desired location
  - Extract each tar file with `tar xvf <tar file>`
  - The second (source) tar file is optional

- 5. Installing Source - Solr
  - Solr is delivered as
    - solr-4.2.1.zip (116M)
  - Copy the file to your desired location
  - Extract the zip file with `unzip <zip file>`

- 6. Configuring Nutch Part 1
  - Assuming we will crawl a single web site
  - Ensure that JAVA_HOME is set
  - `cd apache-nutch-1.6`
  - Edit the agent name in conf/nutch-site.xml
    - `<property> <name>http.agent.name</name> <value>Nutch Spider</value> </property>`
  - `mkdir -p urls ; cd urls ; touch seed.txt`
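
Before editing the Nutch configuration, it can help to confirm that JAVA_HOME is actually usable. The following is a minimal sketch, assuming a bash-like shell; the function name `check_java_home` is hypothetical and not part of Nutch.

```shell
#!/bin/bash
# Hypothetical helper (not part of Nutch): verify JAVA_HOME before running bin/nutch.
check_java_home() {
  if [ -z "${JAVA_HOME:-}" ]; then
    echo "JAVA_HOME is not set"
    return 1
  fi
  if [ ! -x "$JAVA_HOME/bin/java" ]; then
    echo "No java executable under $JAVA_HOME/bin"
    return 1
  fi
  echo "JAVA_HOME looks usable: $JAVA_HOME"
}

# Report the result without aborting the shell session
check_java_home || echo "Fix JAVA_HOME before continuing"
```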

- 7. Configuring Nutch Part 2
  - Add the following URL (ours) to seed.txt
    - http://www.semtech-solutions.co.nz
  - Change the URL filtering in conf/regex-urlfilter.txt by replacing the lines
    - `# accept anything else`
    - `+.`
  - with
    - `+^http://([a-z0-9]*\.)*semtech-solutions\.co\.nz/`
  - This restricts the crawl to URLs found on our own site
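
To see which URLs an accept rule of this shape lets through, the pattern can be tried outside Nutch. The sketch below uses `grep -E` as a stand-in for Nutch's Java regular expressions, which should agree for a pattern this simple. The dots are escaped so they match literal dots only. The function name `url_is_accepted` and the test URLs are illustrative, and the other rules in regex-urlfilter.txt (which Nutch applies first) are not modelled.

```shell
#!/bin/bash
# The accept rule without its leading "+", with dots escaped.
accept_pattern='^http://([a-z0-9]*\.)*semtech-solutions\.co\.nz/'

# Hypothetical helper: succeeds when the URL matches the accept pattern.
url_is_accepted() {
  printf '%s\n' "$1" | grep -Eq "$accept_pattern"
}

# Try a few sample URLs (illustrative, not taken from a real crawl)
for url in \
  "http://www.semtech-solutions.co.nz/" \
  "http://semtech-solutions.co.nz/about" \
  "http://www.example.com/"
do
  if url_is_accepted "$url"; then
    echo "accept $url"
  else
    echo "reject $url"
  fi
done
```

The first two URLs are accepted and the third is rejected, so links leading off the site would be dropped from the crawl.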

- 8. Configuring Solr Part 1
  - `cd solr-4.2.1/example/solr/collection1/conf`
  - Add some extra fields to schema.xml after the `_version_` field, i.e.

- 9. Start Solr Server – Part 1
  - Within solr-4.2.1/example, run the following command
    - `java -jar start.jar`
  - Now try to access the Solr admin web page
    - http://localhost:8983/solr/admin
  - You should now see the admin web site (see next slide)

- 10. Start Solr Server – Part 2
  - Solr Admin web page

- 11. Run Nutch / Solr
  - We are ready to crawl our first web site
  - Go to the apache-nutch-1.6 directory
  - Run the following commands
    - `touch nutch_start.bash`
    - `chmod 755 nutch_start.bash`
    - `vi nutch_start.bash`
  - Add the following text to the file
    - `#!/bin/bash`
    - `bin/nutch crawl urls -solr http://localhost:8983/solr/ -dir crawl -depth 3 -topN 3`
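
If you expect to change the crawl settings often, the same command can be wrapped so that each option has a name. This is only a sketch: the variable names (`SOLR_URL`, `CRAWL_DIR`, `DEPTH`, `TOPN`, `DRY_RUN`) and the function `run_crawl` are hypothetical, while the `bin/nutch crawl` options are the ones used on the slide. By default it only prints the command; it assumes you run it from the apache-nutch-1.6 directory when `DRY_RUN=0`.

```shell
#!/bin/bash
# Hypothetical settings; the defaults reproduce the command from the slide.
SOLR_URL="${SOLR_URL:-http://localhost:8983/solr/}"  # Solr instance to index into
CRAWL_DIR="${CRAWL_DIR:-crawl}"                      # where Nutch stores crawl data
DEPTH="${DEPTH:-3}"                                  # link levels to follow from the seeds
TOPN="${TOPN:-3}"                                    # max pages fetched per level

run_crawl() {
  # Build the command as positional parameters so quoting is preserved
  set -- bin/nutch crawl urls -solr "$SOLR_URL" -dir "$CRAWL_DIR" -depth "$DEPTH" -topN "$TOPN"
  if [ "${DRY_RUN:-1}" = "1" ]; then
    echo "$*"   # dry run: show the command only
  else
    "$@"        # DRY_RUN=0: actually start the crawl
  fi
}

run_crawl
```

For example, `DRY_RUN=0 DEPTH=5 TOPN=50 ./nutch_start.bash` would start a deeper crawl that fetches more pages per level.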

- 12. Run Nutch / Solr
  - Now run the Nutch bash file
    - `./nutch_start.bash`
  - Select the Logging option on the admin console
  - Monitor the Logging console for errors
  - The crawl should finish with no errors, and the crawl window should show the line
    - `Crawl finished: crawl`

- 13. Check Crawled Data
  - Now we check the data that we have crawled
  - In the Admin Console window
    - Set Core Selector to collection1
    - Select the Query option
    - Click the Execute Query button
  - You should now see some of the data that you have crawled
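
The same check can be made from the command line through Solr's select handler. This is a sketch assuming the default collection1 core on localhost:8983; the function name `solr_select_url` and the variables `SOLR_BASE` and `CORE` are illustrative. It only builds and prints the query URL, so it is safe to run without a Solr server.

```shell
#!/bin/bash
# Hypothetical settings matching the example setup on the slides.
SOLR_BASE="${SOLR_BASE:-http://localhost:8983/solr}"
CORE="${CORE:-collection1}"

# Build a match-all (*:*) select URL that returns at most $1 documents as JSON.
solr_select_url() {
  rows="${1:-5}"
  echo "$SOLR_BASE/$CORE/select?q=*:*&wt=json&indent=true&rows=$rows"
}

solr_select_url 5

# To fetch the documents (needs curl and a running Solr server):
#   curl -s "$(solr_select_url 5)"
```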

- 14. Crawled Data
  - Crawled data in a Solr query

- 15. Crawled Data
  - That's your first simple crawl completed
  - Further reading at
    - http://nutch.apache.org
    - http://lucene.apache.org/solr
  - Now you can
    - Add more URLs to your seed.txt
    - Increase the depth of your link search via the options
      - `-depth`
      - `-topN`
    - Modify your URL filtering

- 16. Contact Us
  - Feel free to contact us at
    - www.semtech-solutions.co.nz
    - info@semtech-solutions.co.nz
  - We offer IT project consultancy
  - We are happy to hear about your problems
  - You pay only for the hours you need to solve your problems