Video summarization using clustering

- 1. Video Summarization Using Clustering Sachin DTU/2K12/EC-149 Mentor: Mr. Avinash Ratre

- 2. Introduction We have seen YouTube and other media sources pushing the bounds of video consuming in the past few years. As media sources compete for more of a viewer’s time everyday, one possible alleviation is a video summarization system. A movie teaser is an example of a video summary. However, not everyone has the time to edit their videos for a concise version. This presentation highlights a fast and efficient algorithm using k-means clustering with RGB histograms for creating a video summary. It is aimed particularly at low quality media, specifically YouTube videos.

- 3. Approach 1. 2. 3. 4. 5. 6. Split the input file into time segments of k seconds: f0...fn. Take the first frame of each segment. Let this frame be representative of the segment. We assign it Compute the histograms from x0....xn and assign it y0...yn. Cluster the histograms(y0....yn) into k groups using K-Means. Euclidean distance will be the error function. Round robin for segment selection: Iterate through the k groups and select a segment randomly from a cluster, add it to list l until the number of desired segments are chosen. Join list l of segments together to generate a video summary.

- 5. Feature Selection • We selected RGB color histograms for our feature comparator due to its global nature and speed of processing. Histograms are a good trade-off between accuracy and speed. • Histogram is a frequency approach where it compresses the information of a video frame into a vector. • The majority of YouTube videos are lower quality so extracting more challenging features tends to be more difficult. Histograms can perform well because they do not attempt to infer any semantic meaning in the actual segments.

- 6. Algorithm Group all the similar histograms into the k clusters. Each histogram is representative of the corresponding video segment. K-means algorithm is defined below: 1. Select k random centroid points on our multi-dimensional space. 2. Compute each histogram against all the cluster centroids 3.Each histogram is assigned to the cluster that minimizes the error function. 4.Recompute cluster centroids. 5.On every iteration, check to see if the centroids converged. If not, we go to step 2.

- 7. Error function We use Euclidean distance as our error function. This is the general approach when directly comparing histograms. Additionally, we also experimented with the cosine similarity and saw no noticeable difference in the clustering output.

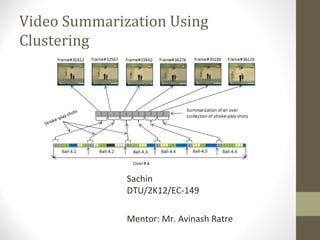

- 8. Results • We selected k = 8 as our k-means parameter and use 20 segments for the output video

- 9. Dataset Following YouTube videos in our system. All of these videos are 320x240. •MotoGP: Recent round of the world motorcycle racing series. This represents a typical sports video. •Man Vs Wild Episode.

- 10. Clusters Generated • When we clustered the MotoGP clip, it was able to separate all the action footage from the pit stand footage. This is particularly useful for viewers who only want to watch the race and not the pit stand. • The Man vs Wild episode was able to correctly cluster different segments. It particularly helped that the uniquely identifying segments had much color similarity. When the Bear(the main actor) was in the desert, the colors are populated with a higher color intensity. Similarly, when he was in the Florida everglades, the colors are lower in intensity.

- 11. MotoGP clusters

- 12. Man vs Wild clusters

- 13. Problems • Repeated segments When a static image is present for a long time, two or more segments will be created from this image. During the clustering, all of the segments with the static image will be clustered in the same group. • Background In the MotoGP video clip, the majority of the segments consists of the road in the background. Our algorithm grouped most of these shots into one cluster. The intended behavior would be to capture the different teams into different clusters because each team has a unique color scheme. However, the background dominated and grouped most of these segments together. It would interesting future work to see if two levels of clustering would be helpful: one for the initial segments and another sub-clustering for within each set.

- 14. Conclusion We have presented a system to automatically create a summarized video from a YouTube video. K-means is a simple and effective method for clustering similar frames together. Our system is modular in design so future work can be developed by substituting in variouscomponents. Instead of using histograms, future work can try to use other features suchas motion vectors or even audio. However, we have demonstrated that a simple feature with a simple unsupervised learning technique can be a good starting point for a video summarization system.

- 15. References • Video Summarization Using Clustering By Tommy Chheng, Department of Computer Science,University of California, Irvine • A User Attention Model for Video Summarization By Yu-Fei Ma, Lie Lu, Hong-Jiang Zhang and Mingjing Li Microsoft Research Asia