Markov chain intro

Markov chain analysis

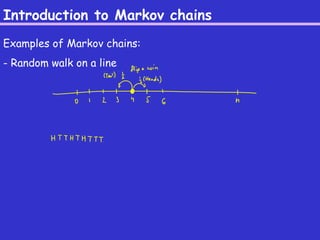

- 1. Introduction to Markov chains. Examples of Markov chains: random walk on a line.

- 2. Introduction to Markov chains. Examples of Markov chains: random walk on a graph.

- 3. Introduction to Markov chains. Examples of Markov chains: random walk on a hypercube.

- 4-6. Introduction to Markov chains. Examples of Markov chains: Markov chain on graph colorings.

- 7. Introduction to Markov chains. Examples of Markov chains: Markov chain on matchings of a graph.

- 8-9. Definition of Markov chains. State space $\Omega$. Transition matrix $P$, of size $|\Omega| \times |\Omega|$. $P(x,y)$ is the probability of getting from state $x$ to state $y$. Each row sums to one: $\sum_{y \in \Omega} P(x,y) = 1$.

- 10. Definition of Markov chains. Example: frog on lily pads.

- 11. Definition of Markov chains. Stationary distribution $\pi$ (on states in $\Omega$). Example: frog with unfair coins.

- 12. Definition of Markov chains Other examples:

- 13. Definition of Markov chains. Properties: aperiodic; irreducible; ergodic (a MC that is both aperiodic and irreducible). Thm (3.6 in Jerrum): An ergodic MC has a unique stationary distribution. Moreover, it converges to it as time goes to $\infty$.
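
As a rough illustration of the definitions above, the sketch below checks that every row of a transition matrix sums to 1 and approximates the stationary distribution by repeatedly applying the matrix to a starting distribution. The two-state matrix and the values `p = 0.3`, `q = 0.1` are assumptions chosen for the example, not taken from the slides, and the function names are hypothetical.

```python
def is_stochastic(P, tol=1e-12):
    """True if every row of P is a probability distribution."""
    return all(min(row) >= 0 and abs(sum(row) - 1.0) < tol for row in P)


def step(dist, P):
    """One step of the chain: the row vector dist multiplied by P."""
    n = len(P)
    return [sum(dist[x] * P[x][y] for x in range(n)) for y in range(n)]


def stationary_by_iteration(P, steps=1000):
    """Approximate the stationary distribution of an ergodic chain."""
    dist = [1.0] + [0.0] * (len(P) - 1)  # start in state 0
    for _ in range(steps):
        dist = step(dist, P)
    return dist


# Assumed example: two states, move 0 -> 1 with prob. p and 1 -> 0 with prob. q.
p, q = 0.3, 0.1
P = [[1 - p, p], [q, 1 - q]]
pi = stationary_by_iteration(P)
print(is_stochastic(P), pi)
```

For this two-state chain the stationary distribution has the closed form $(q/(p+q),\ p/(p+q))$, so the iteration should settle near $(0.25, 0.75)$. The chain is aperiodic and irreducible because all four entries of the matrix are positive.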

- 14. Gambler’s Ruin. A gambler has k dollars initially and bets on (fair) coin flips. If she guesses the coin flip correctly, she gets 1 dollar; if not, she loses 1 dollar. She stops when she has either 0 or n dollars. Is it a Markov chain? How many bets until she stops playing? What are the probabilities of ending with 0 vs. n dollars?

- 15. Gambler’s Ruin. Stationary distribution?

- 16. Gambler’s Ruin. Starting with k dollars, what is the probability of reaching n? What is the probability of reaching 0?

- 17. Gambler’s Ruin. How many bets until 0 or n?
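
The answers to these questions are not in the extracted slide text. The sketch below uses the standard results for the fair game, stated here as an assumption about what the slides derive: starting from k dollars, the probability of reaching n is k/n and the expected number of bets is k(n - k). The code checks that these formulas satisfy the one-step recurrences and offers a small simulation; all function names are hypothetical.

```python
import random
from fractions import Fraction


def win_probability(k, n):
    """Probability of reaching n before 0, starting from k (fair coin)."""
    return Fraction(k, n)


def expected_bets(k, n):
    """Expected number of bets until the gambler holds 0 or n dollars."""
    return k * (n - k)


def check_recurrences(n):
    """Verify h(k) = (h(k-1) + h(k+1)) / 2 and t(k) = 1 + (t(k-1) + t(k+1)) / 2."""
    for k in range(1, n):
        h_avg = (win_probability(k - 1, n) + win_probability(k + 1, n)) / 2
        t_avg = Fraction(expected_bets(k - 1, n) + expected_bets(k + 1, n), 2)
        if win_probability(k, n) != h_avg or expected_bets(k, n) != 1 + t_avg:
            return False
    return True


def simulate(k, n, rng):
    """Play one game; return (reached_n, number_of_bets)."""
    bets = 0
    while 0 < k < n:
        k += 1 if rng.random() < 0.5 else -1
        bets += 1
    return k == n, bets


print(check_recurrences(10), win_probability(3, 10), expected_bets(3, 10))
```

Averaging `simulate(3, 10, random.Random(0))` over many games should give a win frequency near 3/10 and a mean duration near 21 bets, up to sampling noise.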

- 18. Coupon Collecting. There are n coupons. Each time we get a coupon at random, chosen from 1,…,n (i.e., we will likely get some coupons multiple times). How long does it take to get all n coupons? Is this a Markov chain?

- 19-20. Coupon Collecting. How long to get all n coupons?

- 21. Coupon Collecting. Proposition: Let $\tau$ be the random variable for the time needed to collect all $n$ coupons. Then $\Pr(\tau > \lceil n \log n + cn \rceil) \le e^{-c}$.
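
A minimal sketch of the coupon collector, assuming coupons are drawn uniformly and independently and that the logarithm in the proposition is the natural logarithm. It computes the standard expected collection time $n(1 + 1/2 + \dots + 1/n)$ exactly and estimates the tail probability from the proposition by simulation. The function names and the parameters in the usage line are hypothetical.

```python
import math
import random
from fractions import Fraction


def expected_draws(n):
    """Exact expected number of draws to see all n coupons: n * H_n."""
    return n * sum(Fraction(1, i) for i in range(1, n + 1))


def collect_all(n, rng):
    """Draw uniform coupons until all n have been seen; return the draw count."""
    seen = set()
    draws = 0
    while len(seen) < n:
        seen.add(rng.randrange(n))
        draws += 1
    return draws


def tail_frequency(n, c, trials, rng):
    """Empirical frequency of needing more than ceil(n ln n + c n) draws."""
    threshold = math.ceil(n * math.log(n) + c * n)
    exceed = sum(1 for _ in range(trials) if collect_all(n, rng) > threshold)
    return exceed / trials


rng = random.Random(0)
print(expected_draws(3), tail_frequency(20, 1.0, 2000, rng), math.exp(-1.0))
```

The empirical frequency should fall below the bound $e^{-c}$, since the proposition follows from a union bound over the n coupons and is not tight.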

- 22-23. Random Walk on a Hypercube. How many expected steps until we get to a random state? (I.e., what is the mixing time?)
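
One way to explore the mixing-time question numerically is to track the total variation distance between the walk's distribution and the uniform distribution. The sketch below assumes a lazy walk (stay put with probability 1/2, otherwise flip a uniformly chosen coordinate); the laziness is an assumption added here so that the chain is aperiodic, and the slides may define the walk differently. Vertices of the d-dimensional hypercube are encoded as integers from 0 to 2^d - 1, and the function names are hypothetical.

```python
def lazy_step(dist, d):
    """One step of the lazy walk: stay w.p. 1/2, else flip a random coordinate."""
    new = [0.0] * len(dist)
    for x, mass in enumerate(dist):
        new[x] += mass / 2
        for i in range(d):
            new[x ^ (1 << i)] += mass / (2 * d)  # flip bit i of vertex x
    return new


def tv_to_uniform(dist):
    """Total variation distance between dist and the uniform distribution."""
    u = 1.0 / len(dist)
    return 0.5 * sum(abs(p - u) for p in dist)


def tv_profile(d, steps):
    """Distances to uniform after 0, 1, ..., steps steps, starting at vertex 0."""
    dist = [0.0] * (1 << d)
    dist[0] = 1.0
    profile = [tv_to_uniform(dist)]
    for _ in range(steps):
        dist = lazy_step(dist, d)
        profile.append(tv_to_uniform(dist))
    return profile


profile = tv_profile(4, 40)
print(profile[0], profile[10], profile[40])
```

This lazy step is equivalent to picking a coordinate uniformly and replacing it with a fresh random bit, so once every coordinate has been picked at least once the state is exactly uniform. That is the link to coupon collecting: the time to pick all d coordinates gives an upper bound on the mixing time of order d log d.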