Product Brief

IBM Real-time Compression Optimizes Primary Storage

Date: December 2008

Author: Lauren Whitehouse, Senior Analyst

Abstract: Explosive storage growth is increasing capital and operational costs for primary and secondary storage.

IBM Real-time Compression delivers a platform for improving the economics of transferring and storing primary and

secondary data without sacrificing performance while complementing “downstream” capacity optimization

technologies.

Overview

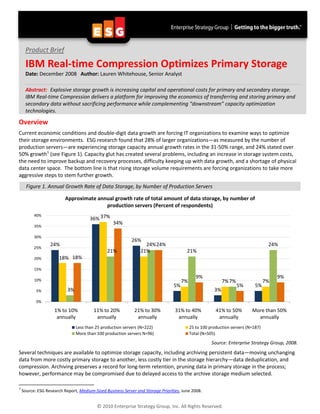

Current economic conditions and double-digit data growth are forcing IT organizations to examine ways to optimize

their storage environments. ESG research found that 28% of larger organizations—as measured by the number of

production servers—are experiencing storage capacity annual growth rates in the 31-50% range, and 24% stated over

50% growth¹ (see Figure 1). This capacity growth has created several problems, including an increase in storage system costs,

the need to improve backup and recovery processes, difficulty keeping up with data growth, and a shortage of physical

data center space. The bottom line is that rising storage volume requirements are forcing organizations to take more

aggressive steps to stem further growth.

Figure 1. Annual Growth Rate of Data Storage, by Number of Production Servers

[Bar chart: approximate annual growth rate of total amount of data storage, by number of production servers (percent of respondents). Growth-rate categories: 1% to 10%, 11% to 20%, 21% to 30%, 31% to 40%, 41% to 50%, and more than 50% annually. Series: less than 25 production servers (N=222), 25 to 100 production servers (N=187), more than 100 production servers (N=96), and total (N=505).]

Source: Enterprise Strategy Group, 2008.

Several techniques are available to optimize storage capacity, including archiving persistent data—moving unchanging

data from more costly primary storage to another, less costly tier in the storage hierarchy—data deduplication, and

compression. Archiving preserves a record for long-term retention, pruning data in primary storage in the process;

however, performance may be compromised due to delayed access to the archive storage medium selected.

¹ Source: ESG Research Report, Medium-Sized Business Server and Storage Priorities, June 2008.

© 2010 Enterprise Strategy Group, Inc. All Rights Reserved.


Most of the hype around optimizing storage capacity has been focused on data deduplication for secondary disk storage.

Data deduplication identifies and eliminates redundant data. After the data is initially seeded on a secondary storage

device, subsequently written data is examined for redundancy. Duplicate data is not written again; a pointer to the

copy already stored is written instead. Because backup presents the largest opportunity for redundancy elimination

(copies of the same data sets are made every day and, in some cases, at multiple points within a day for data recovery

purposes), it made sense for organizations to attack this problem set first. While deduplication performance is

important to keep backups within prescribed “backup windows,” it is still less of an issue than the performance

required for accessing primary data. Deduplication is currently being applied to primary storage, but as a post-process

operation, because the inspection, identification, and redundancy elimination phases of deduplication are

processor-intensive and could affect the performance of active data sets.
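The pointer mechanism described above can be sketched in a few lines. This is a minimal illustration only, assuming fixed-size blocks and SHA-256 fingerprints; the `DedupStore` class, its method names, and the 4 KB block size are hypothetical and do not describe any vendor's implementation.

```python
import hashlib


class DedupStore:
    """Toy block-level deduplicating store (illustrative only)."""

    def __init__(self, block_size=4096):
        self.block_size = block_size  # assumed fixed block size
        self.blocks = {}              # fingerprint -> unique block bytes
        self.files = {}               # file name -> list of fingerprints (pointers)

    def write(self, name, data):
        pointers = []
        for i in range(0, len(data), self.block_size):
            block = data[i:i + self.block_size]
            fp = hashlib.sha256(block).hexdigest()
            # Store the block only if it has not been seen before;
            # otherwise only the pointer is recorded.
            self.blocks.setdefault(fp, block)
            pointers.append(fp)
        self.files[name] = pointers

    def read(self, name):
        return b"".join(self.blocks[fp] for fp in self.files[name])

    def logical_bytes(self):
        return sum(len(self.blocks[fp])
                   for ptrs in self.files.values() for fp in ptrs)

    def physical_bytes(self):
        return sum(len(b) for b in self.blocks.values())


store = DedupStore()
monday = b"A" * 8192 + b"B" * 4096
tuesday = b"A" * 8192 + b"C" * 4096  # only the last block changed
store.write("backup-monday", monday)
store.write("backup-tuesday", tuesday)
print(store.logical_bytes(), store.physical_bytes())  # prints "24576 12288"
```

In this toy case, two backups totaling 24,576 logical bytes occupy 12,288 physical bytes, because the repeated blocks are stored once and referenced by pointer thereafter.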

Compression technology, a popular feature of secondary storage software and hardware, modifies data so that it

occupies a smaller amount of storage, but leaves information intact. All of the data is still there, but its footprint has

been reduced in size. A smaller footprint can reduce I/O traffic to/from the storage system, enabling better

performance and lower CPU utilization. Compression ratios are often limited, as the standard compression algorithms

compare incoming data with a smaller amount of historical data than deduplication. Lossless compression is important,

as losing even a single bit of data when decompressing can spell trouble.
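The lossless property can be checked with a simple round trip. The sketch below uses Python's standard `zlib` module, whose DEFLATE format combines LZ77 with Huffman coding; it stands in for the LZ family in general and is not the algorithm used by any particular appliance. The sample data is made up for illustration.

```python
import zlib

# Repetitive sample data compresses well; real-world ratios depend on content.
original = b"customer_id,order_id,status\n" * 1000

compressed = zlib.compress(original, 6)
restored = zlib.decompress(compressed)

# Lossless: every byte must survive the round trip.
assert restored == original

ratio = len(original) / len(compressed)
print(f"{len(original)} bytes -> {len(compressed)} bytes ({ratio:.1f}:1)")
```

The ratio printed here reflects highly repetitive input and should not be read as typical for production data.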

When it comes to mission-critical or mission-supporting applications, organizations make copies of their data—via

replication and backup—for data protection and test and development. Therefore, a smaller footprint for primary

storage translates into smaller footprints for copies, creating a cascade effect of efficiency.

IBM Real-time Compression offers a real-time, random access capacity optimization solution that takes advantage of a

standards-based lossless LZ compression algorithm and applies it in a new way: to primary storage. The company offers STN-6000

compression appliances for primary network-attached storage (NAS) systems. An STN-6000 appliance sits in the data

path between Windows and Linux clients and NAS system(s), compressing incoming data in real time before it is written

to disk. Prime use cases for the technology today include Oracle databases and virtual server environments where

capacity growth is an issue and compression can have an impact. With a 15:1 compression ratio, IBM Real-time

Compression enables 15 times more data to be stored. Therefore, 10 TB of physical network file capacity can translate

to 150 TB of compressed capacity.
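The capacity arithmetic above can be written out as a short sketch. The function names are illustrative, and the 15:1 figure is simply the ratio quoted in the text, not a guaranteed result.

```python
def effective_capacity_tb(physical_tb, compression_ratio):
    """Logical data that fits on physical_tb at an N:1 compression ratio."""
    return physical_tb * compression_ratio


def space_savings_pct(compression_ratio):
    """Percentage of raw capacity saved at an N:1 compression ratio."""
    return (1 - 1 / compression_ratio) * 100


print(effective_capacity_tb(10, 15))        # prints 150
print(round(space_savings_pct(15), 1))      # prints 93.3
```

Expressed as savings, a 15:1 ratio means roughly 93% less physical capacity for the same logical data.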

Analysis

ESG research found that among respondents currently using data deduplication technology, approximately one-third (33%)

say they have experienced a capacity reduction of less than 10x, 48% report a 10x-20x reduction, and 18%

report reductions ranging from 21x to more than 100x.² The IBM Real-time Compression STN-6000 appliance’s ability to

reduce data 10-20x is in line with current users’ deduplication experience.

In fact, deduplication and compression are complementary. When used together, capacity optimization is amplified.

IBM Real-time Compression compresses the data stored in real-time to achieve optimization, while data deduplication

works to eliminate multiple copies of the same data. For example, if the IBM Real-time Compression STN-6000 is used

with NetApp FAS with A-SIS deduplication in a primary environment, the combined solution offers real-time

compression with block-level data deduplication initiated post-process. Theoretically, if IBM Real-time Compression were

able to achieve even a 10:1 compression ratio and A-SIS achieved a 10:1 deduplication ratio, the combined capacity

optimization would be 100:1. The same would apply for IBM Real-time Compression primary storage optimization

combined with any secondary storage deduplication solution or single-instance archive solution—capacity savings for

“downstream” storage would be improved significantly.
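The theoretical 100:1 figure follows from multiplying the two ratios. The sketch below makes that arithmetic explicit; it assumes the two reductions are fully independent, which is the idealized case the text describes, and the function name is illustrative.

```python
from fractions import Fraction


def combined_ratio(*ratios):
    """Multiply independent N:1 reduction ratios into one overall ratio."""
    total = Fraction(1)
    for r in ratios:
        total *= Fraction(r)
    return total


# 10:1 compression followed by 10:1 deduplication (theoretical case).
combined = combined_ratio(10, 10)
stored_fraction = 1 / combined

print(f"{combined}:1 overall, {float(stored_fraction):.0%} of original size stored")
```

In practice the stages interact, so the product is best treated as an upper bound on the combined effect rather than an expected result.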

² Source: ESG Research Report, Data Protection Market Trends, January 2008.


IBM Real-time Compression creates efficiency for new and existing investments. Several key areas of optimization

include:

• Reduction in storage and storage-related operational costs

Capacity optimization can stretch existing capacity (primary, secondary, and archive) and slow down

incremental capacity purchases. Fewer storage systems mean less management, creating savings in

operational overhead.

• Data center environmental concerns, such as power, cooling, and space efficiency

Less physical storage means smaller floor space requirements and less power and cooling to operate the

storage systems, supporting organizations’ Green IT initiatives.

• Improvements in data accessibility

As previously mentioned, capacity optimization can improve the efficiency of the storage system by giving it

less work to do. Better CPU and disk utilization can improve response times and make the environment

run more efficiently.

• Streamlining data protection—both operational and disaster recovery

Compressed data on primary storage will take up far less space on secondary storage, especially if the

secondary storage process includes deduplication. With or without deduplication, less data will be

transferred and stored and more backup data can reside on disk. This will benefit organizations with backup

window constraints and improve recovery objectives, as it will be more likely that a recovery will occur from

disk. Disaster recovery protection benefits in a similar way. Site-to-site replication of data will be

streamlined: 1) bandwidth between sites can be optimized, 2) transfer can be accelerated, providing a better

time to recovery, and 3) capacity costs at the disaster recovery site can be kept in check.
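The replication benefit listed above can be estimated with simple arithmetic. The sketch below is illustrative only: the 1 TB data set, 100 Mbps link, and 5:1 ratio are hypothetical inputs, and protocol overhead and latency are ignored.

```python
def transfer_hours(data_tb, link_mbps, compression_ratio=1.0):
    """Hours to send data_tb over a link_mbps link, ignoring protocol overhead."""
    bits = data_tb * 1e12 * 8  # decimal terabytes -> bits
    seconds = (bits / compression_ratio) / (link_mbps * 1e6)
    return seconds / 3600


uncompressed = transfer_hours(1, 100)
compressed = transfer_hours(1, 100, compression_ratio=5)
print(f"{uncompressed:.1f} h uncompressed vs {compressed:.1f} h at 5:1")
```

Under these assumptions the transfer time scales inversely with the compression ratio, which is why less data on primary storage shortens site-to-site replication.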

The IBM Real-time Compression solution tackles many of the perceived drawbacks of applying capacity optimization to

primary storage. Some of the criteria primary storage capacity optimization should be evaluated against include:

Performance: Because compression has traditionally been a performance hog, compressing and

decompressing active data accessed by an application may seem risky. IBM Real-time Compression has

experience developing real-time processing and network optimization algorithms, which it applied to its

inline compression appliance. The result? IBM Real-time Compression can maintain aggregate throughput

of 100 MB/sec per compressed 1 Gb Ethernet interface (with its appliance offering several Ethernet ports).

ESG Lab not only validated these performance results, but also showed that the IBM Real-time Compression

appliance, configured with an Oracle database relying on NAS storage, actually increased the number of

transactions that the system could handle and improved response times by between 20% and 90%, depending

on the type of transaction.³ In addition, the STN-6000 improved CPU and disk utilization, adding to the

overall efficiency of the environment.

Availability: Dropping an appliance in the data path could create a point of failure for the application. If the

appliance fails and access to data is cut off, downtime could be experienced. That’s why IBM Real-time

Compression introduced several high availability features. IBM Real-time Compression offers a high

availability configuration, which allows for failover to a second appliance should the first one fail.

Replication from the primary to a secondary site is another fail-safe. Finally, it offers a software utility

that can be installed on any Windows or Linux server to decompress data as an added insurance policy.

Non-disruption of existing processes: One hurdle to overcome when introducing new technology into an

environment is ensuring that it doesn’t break anything else and that it is as transparent as possible to

implement. Deploying the IBM Real-time Compression solution is as easy as installing the appliance

between the network of application servers and NAS filer. There are no special software, drivers, or agents

to install or manage on either side of the appliance. No changes are required to the network or storage.

Support for heterogeneous storage and applications: IT environments often consist of a mix of solutions.

The ability to support heterogeneous storage from a variety of manufacturers, as well as different

applications, will be key to exploiting capacity optimization technology. The ability to standardize on a single

technology across multiple platforms and applications will create economies of scale for ROI, including

savings in deployment, training, and management. Today, IBM Real-time Compression supports any

application running on network-attached file systems from multiple vendors which use CIFS or NFS

protocols.

³ Source: ESG Lab Validation Report, StorWize: Reducing Storage Capacity and Costs without Compromise, December 2008.

Proven: Not all organizations are in the position to play “guinea pig” for new technology, especially when a

misstep could cause downtime or put mission-critical data at risk. That’s why many are often distrustful of

solutions that have not been widely deployed. Luckily, the IBM Real-time Compression solution is based on

the long-proven LZ lossless compression algorithm and, importantly, the company has hundreds of

appliances in deployment and referenceable customers.

The Bottom Line

Budget-cutting pressure is forcing organizations of all sizes to deliver the same or improved services in a faster, better,

and, importantly, cheaper way. Capacity optimization—especially for primary storage—is a small investment

organizations can make now to reap savings that will pay off in many ways for years to come.

IBM Real-time Compression has a compelling story and ESG Lab has validated many of its claims. Its primary storage

capacity optimization appliance can significantly change the economics of running and managing an organization’s

primary, secondary, and archive storage systems. While the solution directly improves the efficiency of primary storage,

the cascade effect of efficiency on secondary and archive systems is apparent.

The IBM Real-time Compression appliance doesn’t currently support Fibre Channel or iSCSI block-based protocols,

limiting its addressable market. The positive news for IBM Real-time Compression is that the overwhelming growth area

for capacity is with file-based data. Therefore, as organizations look to lower-cost, easier-to-manage file storage as

budgets are tightened this year, they may also want to investigate capacity optimization solutions such as the one

offered via IBM Real-time Compression.

All trademark names are property of their respective companies. Information contained in this publication has been obtained by sources The Enterprise Strategy

Group (ESG) considers to be reliable but is not warranted by ESG. This publication may contain opinions of ESG, which are subject to change from time to time. This

publication is copyrighted by The Enterprise Strategy Group, Inc. Any reproduction or redistribution of this publication, in whole or in part, whether in hard-copy

format, electronically, or otherwise to persons not authorized to receive it, without the express consent of the Enterprise Strategy Group, Inc., is in violation of U.S.

copyright law and will be subject to an action for civil damages and, if applicable, criminal prosecution. Should you have any questions, please contact ESG Client

Relations at (508) 482-0188.
