3D Multi Object GAN

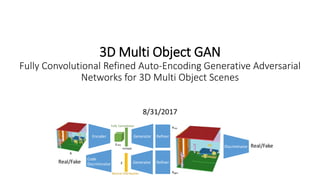

- 1. 3D Multi Object GAN Fully Convolutional Refined Auto-Encoding Generative Adversarial Networks for 3D Multi Object Scenes 8/31/2017 Real/Fake Encoder Generator Fully Convolution x Normal Distribution z Generator Discriminator zenc reshape Code DiscriminatorReal/Fake Refiner Refiner xgen xrec

- 2. Agenda • Introduction • Dataset • Network Architecture • Loss Functions • Experiments • Evaluations • Suggestions of Future Work Source Code: https://github.com/yunishi3/3D-FCR-alphaGAN

- 3. Introduction 3D multi object generative models should be an extremely important tasks for AR/VR and graphics fields. - Synthesize a variety of novel 3D multi objects - Recognize the objects including shapes, objects and layouts Only single objects are generated so far Simple 3D-GANs[1] Simple 3D-VAE[2] Multi Object Scenes

- 4. Dataset • SUNCG dataset Extracted only voxel models from SUNCG dataset -Voxel size: 80 x 48 x 80 (Downsized from 240x144x240) -12 Objects [empty, ceiling, floor, wall, window, chair, bed, sofa, table, tvs, furn, objs] -Amount: around 185000 -Got rid of trimming by camera angles -Chose the scenes that have over 10000 amount of voxels. -No label From Princeton [3]

- 5. Challenges, Difficulties Sparse, so much varieties empty ceiling floor wall window chair bed sofa table tv furnitureobjects 92.466 0.945 1.103 1.963 0.337 0.070 0.368 0.378 0.107 0.009 1.406 0.846 Average occupancy ratio of each objects in dataset [%] [%] Average occupancy ratio Dining room Bedroom Garage Living room

- 6. Network Architecture Fully Convolutional Refined Auto-Encoding Generative Adversarial Networks -Similar architecture with 3DGAN[1] -Encoder, discriminator are almost mirrored from generator -Latent space is fully convolutional layer (5x3x5x16) -Fully convolution enables Zenc to represent more features -Last activation of generator is softmax -> Divide 12 classes -Code discriminator is fully connected (2 hidden layers)[4] -Refiner is similar architecture of simGAN[5] -Multi class activation for multi object scenes -Fully Convolution Novel Contribution Real/Fake Encoder Generator Fully Convolution Normal Distribution z Generator Discriminator zenc reshape Code DiscriminatorReal/Fake Refiner Refiner xgen xrec x

- 7. Network Architecture Inspired by [1] z 5x3x5x512 10x6x10x256 20x12x20x128 40x24x40x64 80x48x80x12 Each Network -3D deconv (Stride:2) -Batch Norm -LRelu(Discriminator) Relu(Encoder, Generator) Last Activation -Softmax 5x3x5x16 Reshape & FC batchnorm relu 5x3x5 stride 2 batchnorm relu Generator Network 5x3x5 stride 2 batchnorm relu 5x3x5 stride 2 batchnorm relu 5x3x5 stride 2 softmax [Wu et al. 2016, MIT]

- 8. Network Architecture Inspired by [5] Each Network -3D deconv (Stride:1) -Relu(Encoder, Generator) -ResNet Block loops 4 times (different weights) Last Activation -Softmax Refiner Network Unlabeled Real Images Synthetic Simulated images Refined Figure5. Exampleoutput of SimGAN for theUnityEyesgazeestimation dataset [40]. (Left) real imagesfrom MPIIGaze[43]. Our refiner network doesnot useany label information from MPIIGazedataset at training time. (Right) refinement resultson UnityEye. The skin texture and the iris region in the refined synthetic images are qualitatively significantly more similar to the real images than to thesynthetic images. More examples areincluded in thesupplementary material. maps Conv f@nxn Conv f@nxn + ReLU ReLU Input Features Output Features Figure6. A ResNet block with two n ⇥n convolutional layers, and 214K real images from the MPIIGaze dataset [43] – samples shown in Figure 5. MPIIGaze is a very chal- lenging eye gaze estimation dataset captured under ex- treme illumination conditions. For UnityEyes we use a single generic rendering environment to generate train- ing data without any dataset-specific targeting. 80x48x80x12 80x48x80x32 80x48x80x32 80x48x80x12 ResNet Block x4 3x3x3 relu 3x3x3 relu 3x3x3 Relu

- 9. Loss / Training Encoder Distribution GAN Loss Reconstruction Loss Discriminator discriminates real and fake scenes accurately Generator fools discriminator ℒ 𝑟𝑒𝑐 = 𝑛 𝑐𝑙𝑎𝑠𝑠 𝑤 𝑛 −𝛾𝑥𝑙𝑜𝑔 𝑥 𝑟𝑒𝑐 − 1 − 𝛾 1 − 𝑥 𝑙𝑜𝑔 1 − 𝑥 𝑟𝑒𝑐 Reconstruction accuracy would be high w is occupancy normalized weights with every batch GAN Loss ℒ 𝐺𝐴𝑁 𝐷 = −log 𝐷 𝑥 − log 1 − 𝐷 𝑥 𝑟𝑒𝑐 − log 1 − 𝐷 𝑥 𝑔𝑒𝑛 ℒ 𝐺𝐴𝑁 𝐺 = − log 𝐷 𝑥 𝑟𝑒𝑐 − log 𝐷 𝑥 𝑔𝑒𝑛 min 𝐸 ℒ = ℒ 𝑐𝐺𝐴𝑁 𝐸 + 𝜆ℒ 𝑟𝑒𝑐 TrainingLoss Generator with refiner min 𝐺 ℒ = 𝜆ℒ 𝑟𝑒𝑐 + ℒ 𝐺𝐴𝑁 𝐺 Discriminator min 𝐷 ℒ = ℒ 𝐺𝐴𝑁 𝐷 Learning rate: 0.0001 Batch size: 20(Base), 8(Refiner) Iteration: 100000 (75000:Base, 25000:Refiner) ℒ 𝑐𝐺𝐴𝑁 𝐷 = −log 𝐷𝑐𝑜𝑑𝑒 𝑧 − log 1 − 𝐷𝑐𝑜𝑑𝑒 𝑧 𝑒𝑛𝑐 ℒ 𝑐𝐺𝐴𝑁 𝐸 = − log 𝐷𝑐𝑜𝑑𝑒 𝑧 𝑒𝑛𝑐 Code discriminator discriminates real and fake distribution accurately Encoder fools code discriminator Code Discriminator min 𝐶 ℒ = ℒ 𝑐𝐺𝐴𝑁 𝐷 Refiner is trained after 75000 iterations

- 10. Experiments Refiner smooths and refines shapes visually. Generated scenes from random distribution were not realistic Generated from random distribution FC-VAE 3D FCR-alphaGAN Reconstruction Real Reconstruction Almost reconstructed, but small shapes have disappeared. Before Refine After Refine This architecture worked better than just VAE, but it’s not enough. This is because encoder was not generalized to the distribution Before Refine After Refine

- 11. Result Numerical evaluation of reconstruction by IoU Intersection-over Union(IoU) [6] Reconstruction accuracy got high due to the fully convolution and alphaGAN IoU for every class IoU for all Same number of latent space dimension Same number of latent space dimension

- 12. Evaluations Interpolation Smooth transition between scenes are built

- 13. Evaluations Latent Space Evaluation The 2D represented mapping by SVD of 200 encoded samples Color:1D embedding by SVD of centroid coordinates of each scene Fully convolution Standard VAE Fully Convolution enables the latent space to be related to spatial context This follows 1d embedding of centroid coordinates from lower right to upper left. This does not.

- 14. Evaluations Latent space evaluation by added noise The effects of individual spatial dimensions composed of 5x3x5 as the latent space. Red means the level of changes given by normal distribution noises of one dimension. ・2,0,4 dimension changes objects in right back area. ・4,0,1 dimension changes objects in left front area. ・1,0,0 dimension changes objects in left back area. ・4,0,4 dimension changes objects in right front area. Fully Convolution enables the latent space to be related to spatial context

- 15. Suggestions of Future Work ・Revise the dataset This dataset is extremely sparse and has plenty of varieties. Floors and small objects are allocated to huge varieties of positions, also some of the small parts like legs of chairs broke up in the dataset because of the downsizing. That makes predicting latent space too hard. Therefore, it is an important work to revise the dataset like limiting the varieties or adjusting the positions of objects. ・Redefine the latent space In this work, I defined the latent space with one space which includes all information like shapes and positions of each object. Therefore, some small objects disappeared in the generated models, and a lot of non-realistic objects were generated. In order to solve that, it is an important work to redefine the latent space like isolating it to each object and layout. However, increasing the varieties of objects and taking account into multiple objects are required in that case.