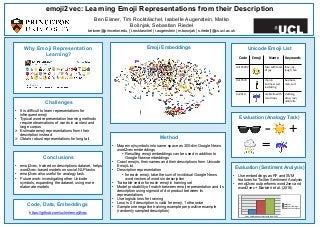

emoji2vec: Learning Emoji Representations from their Description

SocialNLP 2016 @ EMNLP
Paper: https://arxiv.org/abs/1609.08359
Code & Embeddings: https://github.com/uclmr/emoji2vec

Many current natural language processing applications for social media rely on representation learning and utilize pre-trained word embeddings. There currently exist several publicly available, pre-trained sets of word embeddings, but they contain few or no emoji representations even as emoji usage in social media has increased. In this paper we release emoji2vec, pre-trained embeddings for all Unicode emoji which are learned from their description in the Unicode emoji standard. The resulting emoji embeddings can be readily used in downstream social natural language processing applications alongside word2vec. We demonstrate, for the downstream task of sentiment analysis, that emoji embeddings learned from short descriptions outperform a skip-gram model trained on a large collection of tweets, while avoiding the need for contexts in which emoji need to appear frequently in order to estimate a representation.



Ben Eisner, Tim Rocktäschel, Isabelle Augenstein, Matko Bošnjak, Sebastian Riedel
beisner@princeton.edu, {t.rocktaschel | i.augenstein | m.bosnjak | s.riedel}@cs.ucl.ac.uk

Method
• Map emoji symbols into the same space as the 300-dim Google News word2vec embeddings
• Resulting emoji embeddings can be used in addition to Google News embeddings
• Crawl emoji, their names and their descriptions from the Unicode Emoji List
• Description representation
    • For each emoji, take the sum of the individual Google News word vectors of the words in its description
• Trainable vector for each emoji in the training set
• Model the probability of a match between an emoji representation and its description using the sigmoid of the dot product between their representations
• Use logistic loss for training
• Loss is 0 if description is valid for emoji, 1 otherwise
• Sample one negative training example per positive example (randomly sampled description)

Why Emoji Representation Learning?

Challenges
• It is difficult to learn representations for infrequent emoji
• Typical word representation learning methods require observations of words in context and a large corpus
→ Estimate emoji representations from their description instead
→ Obtain robust representations for the long tail

Evaluation (Analogy Task)

Evaluation (Sentiment Analysis)
• Use embeddings as RF and SVM features for Twitter Sentiment Analysis
• emoji2vec outperforms word2vec and word2vec + Barbieri et al. (2016)

[Figure: 3-way classification accuracy with linear SVM for word2vec, word2vec + Barbieri, and word2vec + emoji2vec; axis range 57.5 to 61]

Conclusions
• emoji2vec, trained on a descriptions dataset, helps word2vec-based models on social NLP tasks
• emoji2vec is also useful for the analogy task
• Future work: investigating other Unicode symbols, expanding the dataset, using more elaborate models

Code, Data, Embeddings
https://github.com/uclmr/emoji2vec

Unicode Emoji List

| Code | Emoji Name | Keywords |
|---|---|---|
| U+1F602 | face with tears of joy | face, joy, laugh, tea |
| U+1F574 | man in business suit levitating | business, man, suit |
| U+2614 | umbrella with rain drops | clothing, drop, rain, umbrella |

Emoji Embeddings

[Figure: emoji vector arithmetic of the form − + =; the emoji glyphs were lost in extraction]
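
The Method bullets can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the authors' implementation (that lives in the linked repository). The 6-dimensional one-hot "word vectors" stand in for the 300-dim Google News embeddings, the shortened descriptions are made up, and the names `WORD_VECS`, `DESCRIPTIONS`, `describe`, and `train` are hypothetical. It also assumes a target label of 1 for an emoji's own description and 0 for a randomly sampled one, which is one reading of the poster's logistic-loss bullets.

```python
import numpy as np

# Toy stand-in for the Google News word2vec vectors (assumption: one-hot, 6-dim).
WORDS = ["face", "joy", "man", "suit", "umbrella", "rain"]
WORD_VECS = dict(zip(WORDS, np.eye(len(WORDS))))

# Hypothetical, shortened descriptions keyed by Unicode code point.
DESCRIPTIONS = {
    "U+1F602": "face joy",
    "U+1F574": "man suit",
    "U+2614": "umbrella rain",
}


def describe(text):
    """Description representation: sum of the word vectors in the description."""
    return np.sum([WORD_VECS[w] for w in text.split()], axis=0)


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def train(desc_vecs, epochs=200, lr=0.1, seed=0):
    """Learn one trainable vector per emoji with logistic loss and
    one randomly sampled negative description per positive example."""
    rng = np.random.default_rng(seed)
    n, dim = desc_vecs.shape
    emoji_vecs = rng.normal(scale=0.1, size=(n, dim))
    for _ in range(epochs):
        for i in rng.permutation(n):
            # Sample a description index different from i as the negative.
            neg = int(rng.integers(n - 1))
            if neg >= i:
                neg += 1
            for j, y in ((i, 1.0), (neg, 0.0)):
                # Match probability: sigmoid of the dot product.
                p = sigmoid(emoji_vecs[i] @ desc_vecs[j])
                # Gradient of -[y log p + (1 - y) log(1 - p)] w.r.t. the emoji vector.
                emoji_vecs[i] -= lr * (p - y) * desc_vecs[j]
    return emoji_vecs


desc_vecs = np.stack([describe(t) for t in DESCRIPTIONS.values()])
emoji_vecs = train(desc_vecs)

# Rows: emoji, columns: descriptions; the diagonal holds the valid pairs.
match_probs = sigmoid(emoji_vecs @ desc_vecs.T)
print(np.round(match_probs, 2))
```

Only the emoji vectors are updated here; the description vectors stay fixed as sums of pre-trained word vectors, which is what places the learned emoji in the same space as the word embeddings.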